
Facilitated by The Modern Data Company in collaboration with the Modern Data 101 Community


For years, organisations have tried to capture their business in a single, coherent model. In practice, every attempt ends up being only a partial representation because the business changes faster than the model can. Still, some form of structure is unavoidable.

Data has no practical value until it is organised, interpreted, and tied to real business meaning. Whether structure is defined upfront or inferred later, companies can only extract insight when relationships, definitions, and context are explicit. Without that foundation, data environments quickly lose clarity and become unusable.

This is the basic transition every enterprise faces: moving from raw data to information that can be understood, linked, and acted upon. Just as a reader builds a mental model while processing an unstructured text, organisations need a systematic way to interpret their data before any intelligence, human or machine, can be applied.

🎢 Dataversity reports that 64% of organisations are actively using data modeling, and adoption is expected to keep growing.

This article explores the value of data modeling for enterprises and how it drives AI readiness in today’s business ecosystems.


A data model is the semantic blueprint of your business, expressed in data. It defines the meaning of key concepts (customers, transactions, products, events) and how they relate to each other. Where pipelines move data and storage systems hold data, the data model explains what the data represents.

✨ IBM defines data modeling as “the process of creating a visual representation of either a whole information system or parts of it to communicate connections between data points and structures.”

A good data model creates a shared language between teams. It standardises definitions, clarifies relationships, and ensures that “customer,” “revenue,” or “churn” mean the same thing everywhere. Without that shared understanding, every dashboard, product feature, and ML model ends up reinventing the truth.
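One way to make a shared definition concrete is to keep it in a single registry that every consumer reads from. The sketch below is a minimal Python illustration, not any product’s API: the `Customer` shape, the `METRICS` registry, and the 90-day inactivity threshold for churn are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Customer:
    customer_id: str
    last_order_date: date


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    description: str
    compute: Callable[[List[Customer], date], float]


def churn_rate(customers: List[Customer], as_of: date, inactive_days: int = 90) -> float:
    """Share of customers whose last order is more than `inactive_days` days before `as_of`."""
    if not customers:
        return 0.0
    churned = sum(1 for c in customers if (as_of - c.last_order_date).days > inactive_days)
    return churned / len(customers)


# Hypothetical registry: the single place where "churn_rate" is defined.
# The 90-day threshold is an illustrative assumption.
METRICS: Dict[str, MetricDefinition] = {
    "churn_rate": MetricDefinition(
        name="churn_rate",
        description="Customers with no order in the last 90 days / all customers",
        compute=churn_rate,
    ),
}


def get_metric(name: str) -> MetricDefinition:
    """Look up a metric; undefined metrics fail loudly instead of being reinvented."""
    if name not in METRICS:
        raise KeyError(f"no canonical definition for {name!r}")
    return METRICS[name]
```

With this in place, a dashboard and an ML feature pipeline that both call `get_metric("churn_rate")` compute churn the same way, and a request for an undefined metric raises an error instead of silently inventing its own definition.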


An enterprise data model (EDM) provides shared semantics, common structures, canonical definitions, and governed standards that are used across operational and analytical systems.

Increasingly, enterprises also need a semantic layer to unify models across AI, BI, federated systems, and data products. This layer becomes the backbone of discoverability, interoperability, and machine reasoning.


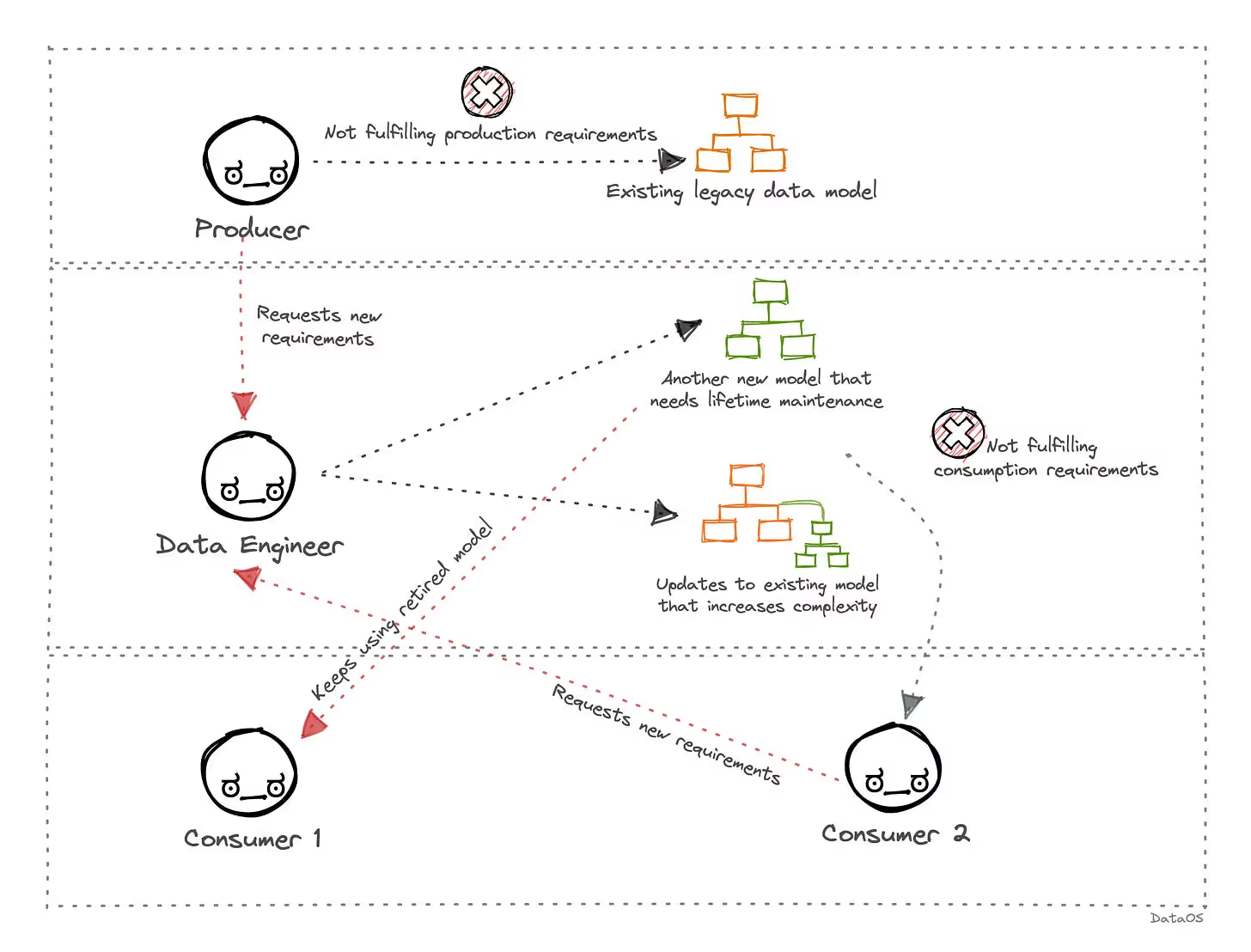

Poor data modeling erodes efficiency and creates a divide between data producers, consumers, and engineers.

That is why, strategically, enterprises gain a great deal by placing data modeling at the core of their capability stack.

Robust data modeling enforces validation rules, standards, and the identification of golden sources. These structures tend to reduce ambiguity, boost data quality, and ensure that analytical and AI systems work with trustworthy data.
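To illustrate how validation rules and a golden source can be encoded rather than merely documented, here is a minimal Python sketch. The record fields, the allowed country codes, and the source ranking in `SOURCE_PRIORITY` are hypothetical; real golden-source selection often also weighs recency and field-level completeness.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class CustomerRecord:
    customer_id: str
    email: Optional[str]
    country: Optional[str]
    source: str  # system the record came from


# Hypothetical source ranking: lower number = more authoritative (the "golden source").
SOURCE_PRIORITY: Dict[str, int] = {"crm": 0, "billing": 1, "web_signup": 2}

# Hypothetical reference data for the country rule.
ALLOWED_COUNTRIES = {"DE", "FR", "GB", "US"}


def validate(record: CustomerRecord) -> List[str]:
    """Return rule violations; an empty list means the record is valid."""
    errors = []
    if not record.customer_id:
        errors.append("customer_id is required")
    if record.email is not None and "@" not in record.email:
        errors.append("email must contain '@'")
    if record.country is not None and record.country not in ALLOWED_COUNTRIES:
        errors.append(f"unknown country code {record.country!r}")
    if record.source not in SOURCE_PRIORITY:
        errors.append(f"unregistered source {record.source!r}")
    return errors


def golden_record(candidates: List[CustomerRecord]) -> CustomerRecord:
    """Pick the valid record that comes from the highest-priority source."""
    valid = [r for r in candidates if not validate(r)]
    if not valid:
        raise ValueError("no valid candidate records")
    return min(valid, key=lambda r: SOURCE_PRIORITY[r.source])
```

Given three candidate versions of customer `42` from `crm`, `billing`, and `web_signup`, `golden_record` discards any that fail validation and returns the valid `crm` record, because that source is ranked highest.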

Because predictive AI relies heavily on stable, well-structured inputs, enterprise modeling is an essential prerequisite for reliable automation and risk reduction.


AI systems, whether predictive models, autonomous agents, or LLMs, benefit from structured, well-governed pipelines. Enterprise models form the foundation of knowledge graphs, vector-enabled semantic search, entity embeddings, and the context exposed to agents through the Model Context Protocol (MCP).

These representations allow AI systems to reason across entities such as products, customers, suppliers, transactions, and assets with far better accuracy. Enterprise data modeling is therefore directly linked to how well AI systems understand the business.

In one of his community pieces, Alejandro Aboy shared some thoughts on the challenges organisations face when using AI-driven modeling to reduce inefficiencies:

We need solid foundations, a system that already works for us, and a system to put everything together that can help speed up our data modeling processes.

Data models remove ambiguity by establishing shared semantics and definitions across analytics, business, engineering, and AI teams. This minimises misinterpretation and accelerates collaboration.

An enterprise model then effectively becomes the source of semantic truth, ensuring that each domain, such as supply chain, finance, operations, and marketing, operates from the same foundation.

Enterprise models offer stable, governed structures that simplify migrations, reduce rework, and minimise schema fragmentation. This is crucial for long-lived AI systems, which require consistent schemas and predictable input formats.

Well-developed models mitigate future technical debt, allowing organisations to develop systems faster and with increased confidence.

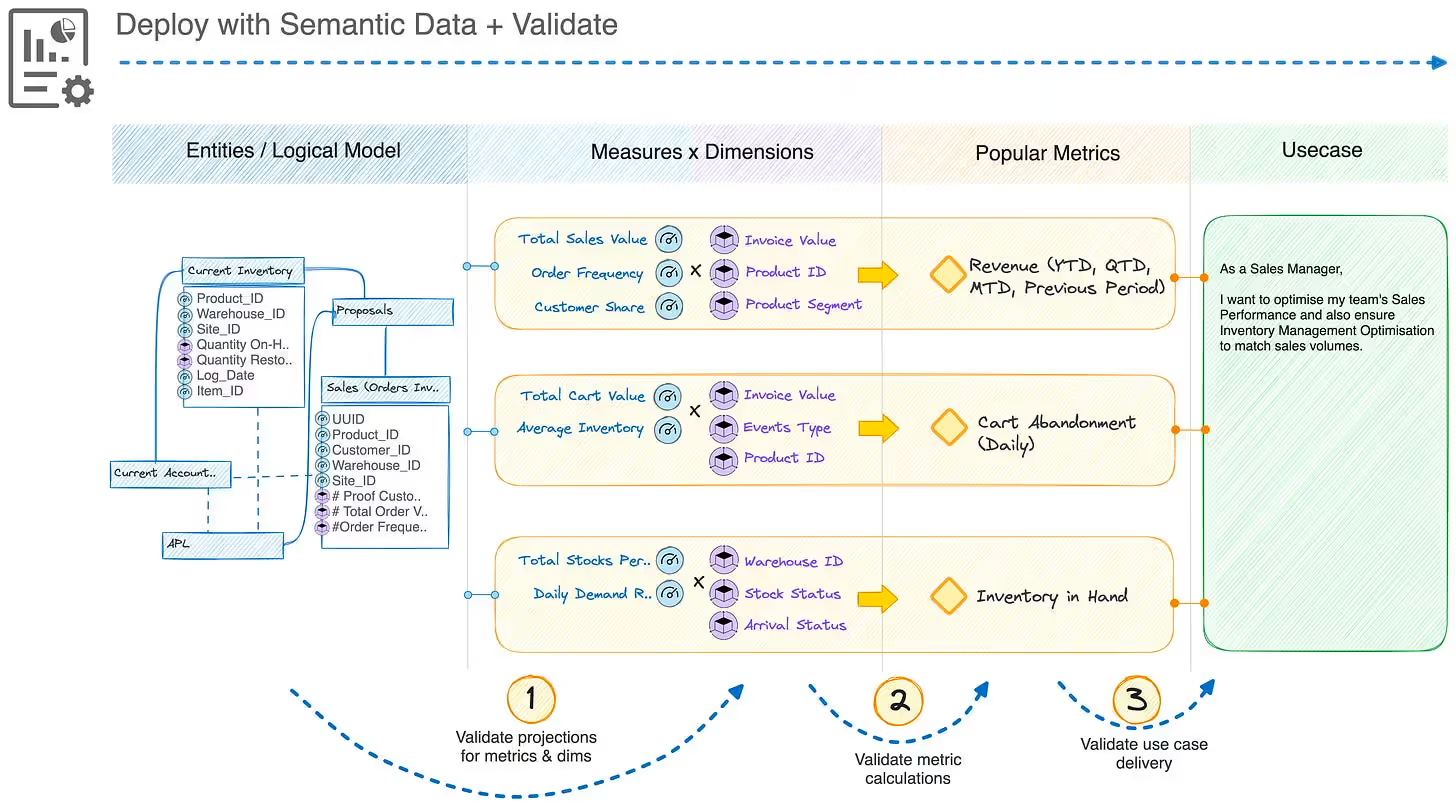

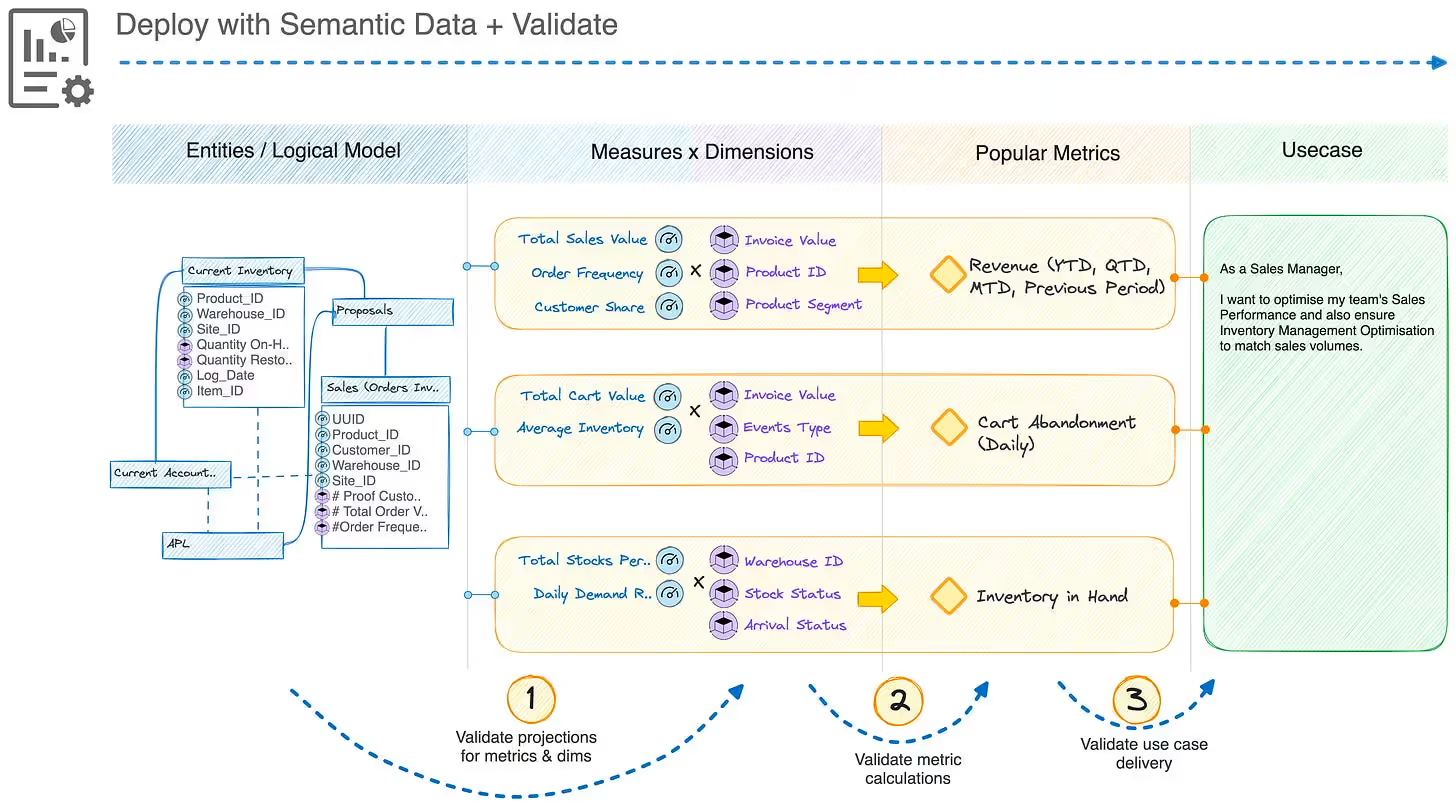

Enterprise data modeling works across multiple layers, each serving a distinct purpose in translating business understanding into AI-ready, technically precise structures. Together, these layers carry data from simple concepts to well-governed, high-performing implementations without sacrificing semantic consistency.

The conceptual layer captures the organisation’s business view. It defines essential entities, business policies, domain boundaries, and high-level relationships. This layer aligns stakeholders, establishes a shared vocabulary, and anchors data products and AI systems in real business semantics.

The logical layer adds rules, structure, and constraints while remaining technology-agnostic. It covers normalising and decomposing entities, detailing relationships and attributes, establishing identifiers, keys, and integrity constraints, and embedding business rules. These elements turn business meaning into a rigorous, unambiguous schema that is well suited to analytics and governance.
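The logical layer’s keys and integrity constraints can be expressed without reference to any database. The following minimal Python sketch assumes a hypothetical two-entity model (`customer` and `order`); it first checks that the model itself is consistent and then finds rows that violate referential integrity.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Entity:
    name: str
    attributes: List[str]
    primary_key: str


@dataclass(frozen=True)
class Relationship:
    child: str        # entity holding the foreign key
    foreign_key: str  # attribute on the child
    parent: str       # entity being referenced


def check_model(entities: Dict[str, Entity], relationships: List[Relationship]) -> List[str]:
    """Report structural errors in the logical model itself."""
    problems = []
    for e in entities.values():
        if e.primary_key not in e.attributes:
            problems.append(f"{e.name}: primary key {e.primary_key!r} is not an attribute")
    for r in relationships:
        if r.parent not in entities or r.child not in entities:
            problems.append(f"{r.child}->{r.parent}: unknown entity")
        elif r.foreign_key not in entities[r.child].attributes:
            problems.append(f"{r.child}: foreign key {r.foreign_key!r} is not an attribute")
    return problems


def orphan_rows(rows: Dict[str, List[dict]], entities: Dict[str, Entity], rel: Relationship) -> List[dict]:
    """Child rows whose foreign key matches no parent key (an integrity violation)."""
    parent_pk = entities[rel.parent].primary_key
    parent_keys = {row[parent_pk] for row in rows[rel.parent]}
    return [row for row in rows[rel.child] if row[rel.foreign_key] not in parent_keys]


# Hypothetical model used for illustration.
entities = {
    "customer": Entity("customer", ["customer_id", "name"], "customer_id"),
    "order": Entity("order", ["order_id", "customer_id", "amount"], "order_id"),
}
order_to_customer = Relationship("order", "customer_id", "customer")
```

Nothing here mentions tables, column types, or a storage engine, so the same logical model could later be mapped to a warehouse, a lakehouse, or a document store.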

The physical layer turns the logical design into a platform-specific implementation. It defines tables, column types, and indexes, the strategies for partitioning and clustering, and other performance and optimisation considerations. This is where the model becomes a production-ready artefact, suited to cloud lakehouses, warehouses, document stores, or vector databases.
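As an illustration, a hypothetical customer/order model can be given a physical form. This sketch uses Python’s built-in `sqlite3` module only because it runs anywhere; the column types, the `CHECK` constraint, and the index are example choices, and because SQLite has no native partitioning, that part of physical design is not shown.

```python
import sqlite3

# Hypothetical physical design on SQLite. On a warehouse or lakehouse, this step
# would also choose partition and clustering keys, which SQLite does not support.
DDL = """
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,
    name        TEXT NOT NULL,
    country     TEXT NOT NULL
);
CREATE TABLE customer_order (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer(customer_id),
    amount      REAL NOT NULL CHECK (amount >= 0),
    order_date  TEXT NOT NULL  -- ISO-8601 date string
);
-- Index chosen for the expected access path: orders looked up by customer.
CREATE INDEX idx_order_customer ON customer_order(customer_id);
"""


def build_schema() -> sqlite3.Connection:
    """Create the physical schema in an in-memory database."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled
    conn.executescript(DDL)
    return conn
```

Inserting an order for a customer that does not exist raises `sqlite3.IntegrityError`, so the referential integrity rule from the logical model is now enforced by the platform itself.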

Beyond traditional modeling, enterprise data today also requires a semantic layer to support AI, decision intelligence, search, and agentic workflows. This includes BI models and metrics layers, taxonomies and ontologies, entity models, knowledge graphs and canonical vocabularies, as well as embeddings and vector representations. These structures give AI systems the relationships, context, and meaning they need to reason effectively with enterprise data.
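To make the idea of relationships and context concrete, here is a minimal in-memory knowledge graph in Python. It is a sketch, not a graph database: entities are plain strings such as `customer:C1`, and the predicates `purchased`, `supplied_by`, and `located_in` are hypothetical. The `reachable` method shows the kind of multi-hop traversal an AI agent might use to answer a question such as which countries a customer’s purchases depend on.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


class KnowledgeGraph:
    """A tiny triple store: (subject, predicate, object) edges kept in memory."""

    def __init__(self) -> None:
        self._out: Dict[str, List[Tuple[str, str]]] = defaultdict(list)

    def add(self, subject: str, predicate: str, obj: str) -> None:
        self._out[subject].append((predicate, obj))

    def objects(self, subject: str, predicate: str) -> List[str]:
        """Direct neighbours of `subject` along one predicate."""
        return [o for p, o in self._out.get(subject, []) if p == predicate]

    def reachable(self, start: str, predicates: Set[str]) -> Set[str]:
        """All entities reachable from `start` by following only the given predicates."""
        seen: Set[str] = set()
        stack = [start]
        while stack:
            node = stack.pop()
            for p, o in self._out.get(node, []):
                if p in predicates and o not in seen:
                    seen.add(o)
                    stack.append(o)
        return seen


# Hypothetical facts linking a customer, a product, a supplier, and a country.
kg = KnowledgeGraph()
kg.add("customer:C1", "purchased", "product:P1")
kg.add("product:P1", "supplied_by", "supplier:S1")
kg.add("supplier:S1", "located_in", "country:DE")
```

Following `purchased`, `supplied_by`, and `located_in` from `customer:C1` reaches `country:DE`; that answer depends entirely on the relationships the model made explicit.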

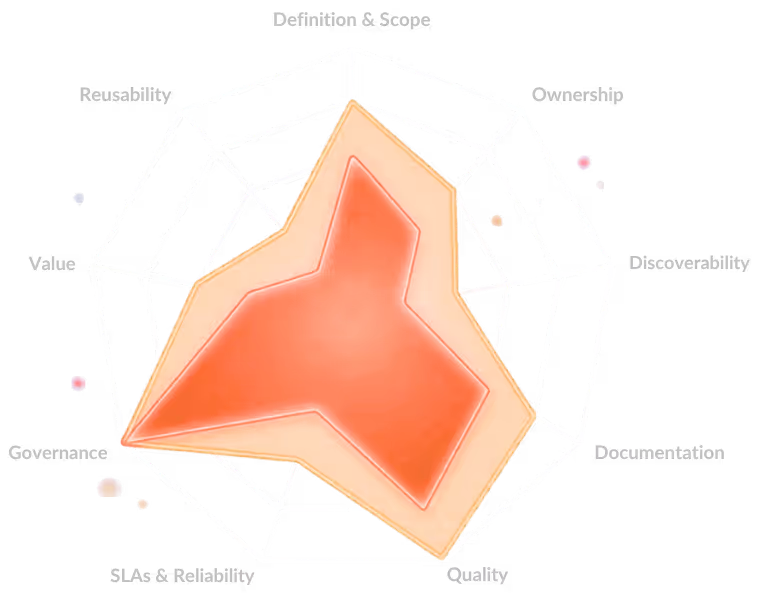

Enterprise data models underpin a vital discipline: designing data before building the product. Model-first thinking ensures that each data product starts with clearly defined entities, ownership boundaries, relationships, semantics, usage expectations, and SLAs.

A well-governed enterprise model becomes the schema contract for every data product, informing its APIs, transformation logic, versioning, and downstream consumption requirements.
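When the model serves as a contract, a schema change can be classified automatically before it reaches consumers. The Python sketch below assumes a semantic-versioning convention (major bump for breaking changes, minor for additive ones, patch otherwise) and a schema represented as a simple column-to-type mapping; both are illustrative assumptions rather than a feature of any particular platform.

```python
from typing import Dict, List

Schema = Dict[str, str]  # column name -> logical type (illustrative representation)


def breaking_changes(old: Schema, new: Schema) -> List[str]:
    """Changes that would break existing consumers: removed or retyped columns."""
    problems = []
    for column, col_type in old.items():
        if column not in new:
            problems.append(f"removed column {column!r}")
        elif new[column] != col_type:
            problems.append(f"column {column!r} changed {col_type} -> {new[column]}")
    return problems


def next_version(version: str, old: Schema, new: Schema) -> str:
    """Assumed semver-style rule: major if breaking, minor if columns added, else patch."""
    major, minor, patch = (int(x) for x in version.split("."))
    if breaking_changes(old, new):
        return f"{major + 1}.0.0"
    if set(new) - set(old):
        return f"{major}.{minor + 1}.0"
    return f"{major}.{minor}.{patch + 1}"
```

Under these assumptions, adding a `segment` column to a `1.2.0` contract yields `1.3.0`, while dropping `revenue` forces `2.0.0` and signals that consumers must be notified before release.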

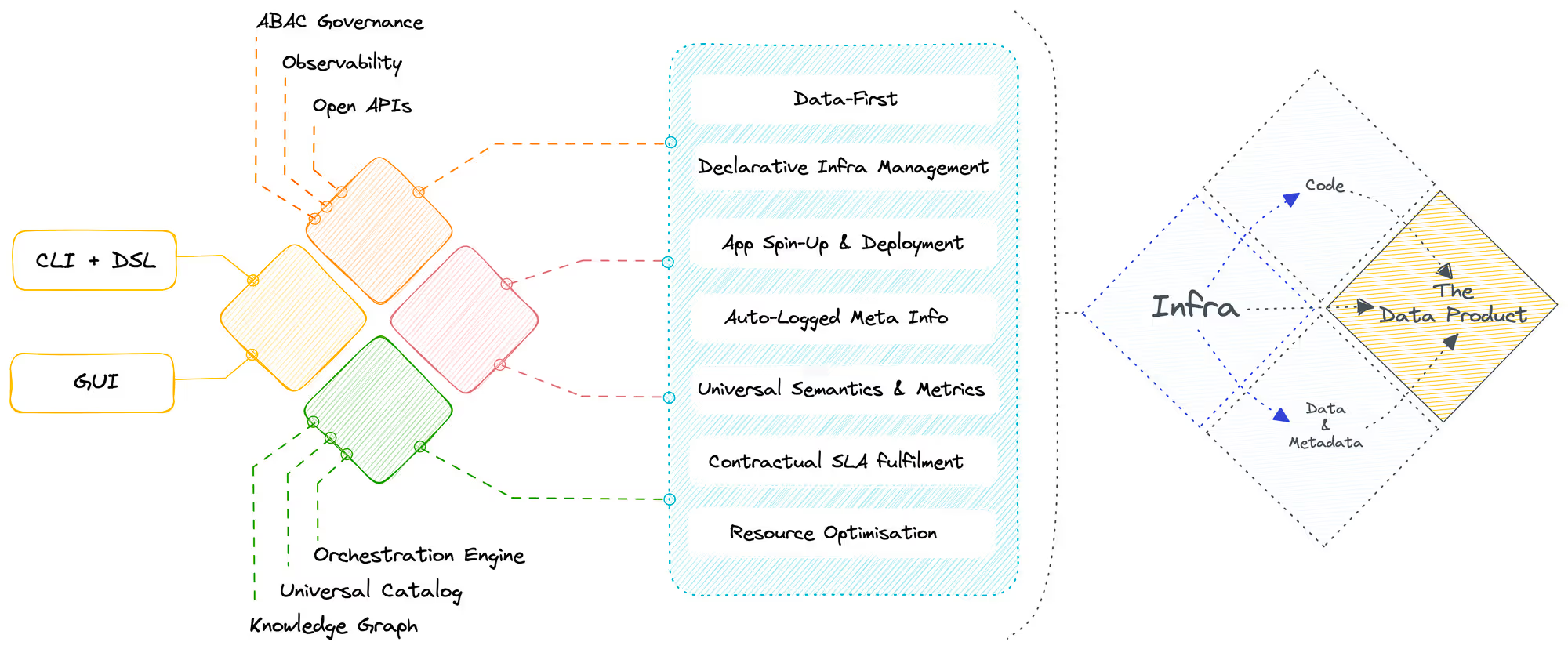

Modern Data Developer Platforms strengthen this model-first workflow with capabilities such as automated schema inference, reverse engineering, lineage extraction, and semantic validation, keeping models consistent as teams scale.

Standardised enterprise models improve interoperability by reducing fragmentation and delivering standard definitions for domains such as product, customer, supplier, or asset. They also provide consistent interfaces, APIs, metadata structures, and naming conventions to drive seamless integration across clouds and systems.

In a Data Developer Platform, these shared models enhance federation, discoverability, and composability: AI agents and developers can locate canonical entities, trust definitions, understand lineage, and then quickly assemble new data products from model-aligned building blocks.
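Automated schema inference is easiest to picture on a small scale. The following Python sketch infers a type and nullability for each field from sample records; the type names, the rule that mixed integers and floats widen to `float`, and treating a missing key as a null are all assumptions made for the illustration, not the behaviour of any specific platform.

```python
from typing import Any, Dict, Iterable, List, Tuple

# bool must be checked before int, because bool is a subclass of int in Python.
_TYPE_NAMES: List[Tuple[type, str]] = [
    (bool, "boolean"),
    (int, "integer"),
    (float, "float"),
    (str, "string"),
]


def _type_name(value: Any) -> str:
    for py_type, name in _TYPE_NAMES:
        if isinstance(value, py_type):
            return name
    return "unknown"


def infer_schema(records: Iterable[Dict[str, Any]]) -> Dict[str, Dict[str, Any]]:
    """Infer a type and nullability per field from sample records."""
    rows = list(records)
    fields = sorted({key for row in rows for key in row})
    schema: Dict[str, Dict[str, Any]] = {}
    for field in fields:
        values = [row.get(field) for row in rows]  # assumption: a missing key counts as null
        types = {_type_name(v) for v in values if v is not None}
        if types == {"integer", "float"}:
            types = {"float"}  # assumption: widen mixed numerics
        if len(types) == 1:
            col_type = types.pop()
        elif types:
            col_type = "mixed"
        else:
            col_type = "unknown"  # only nulls observed
        schema[field] = {"type": col_type, "nullable": any(v is None for v in values)}
    return schema
```

For samples such as `{"id": 1, "amount": 9.5}` and `{"id": 2, "amount": 3, "email": None}`, `id` is inferred as a non-nullable integer and `amount` as a float; a platform would then present such a draft for a modeler to confirm rather than accept it blindly.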

Enterprise models need to shift from static documentation into AI-ready semantic assets that support automation, reasoning, and contextual understanding. Platforms enrich them with lineage mapping, entity resolution, business glossaries, and domain-specific semantics, turning models into knowledge structures that AI agents and copilots can actually use.

Supply chain knowledge graphs, Customer 360 models, and product catalog ontologies are practical examples: they give AI the context needed to automate tasks, generate insights, and orchestrate data workflows.

AI-native capabilities like LLM-assisted definition harmonisation, duplication detection, and canonical model generation further reduce modelling debt while accelerating governance.
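The text mentions LLM-assisted duplication detection; the sketch below deliberately swaps in a much simpler rule-based technique, normalising names to a match key, to show the underlying idea of grouping records that refer to the same real-world entity. The legal-suffix list and the supplier names are hypothetical, and a production pipeline would typically add fuzzy or model-based matching on top.

```python
import re
import unicodedata
from collections import defaultdict
from typing import Dict, List

# Hypothetical list of legal-form suffixes to ignore when matching.
_SUFFIXES = {"inc", "ltd", "llc", "gmbh", "corp"}


def normalise(name: str) -> str:
    """Case-fold, strip accents and punctuation, drop legal suffixes.

    This simple key only handles Latin-script names; other characters become spaces.
    """
    text = unicodedata.normalize("NFKD", name)
    text = "".join(ch for ch in text if not unicodedata.combining(ch)).casefold()
    text = re.sub(r"[^a-z0-9 ]", " ", text)
    return " ".join(t for t in text.split() if t not in _SUFFIXES)


def duplicate_groups(records: Dict[str, str]) -> List[List[str]]:
    """Group record ids whose names normalise to the same match key."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for record_id, name in records.items():
        groups[normalise(name)].append(record_id)
    return [ids for ids in groups.values() if len(ids) > 1]
```

With suppliers named `Müller GmbH`, `MULLER gmbh.`, `Acme, Inc.`, `Acme Inc`, and `Globex`, the first two and the next two each collapse to one match key, which gives a steward two candidate merges to review.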

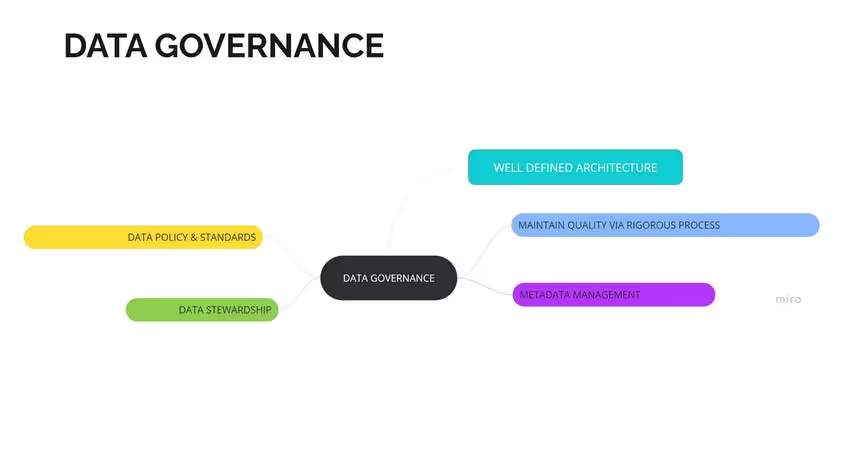

Data governance works only when the underlying data structures are consistent, transparent, and well-defined. Data modeling provides that foundation, embedding rules, meaning, and relationships into the fabric of organisational data.

In today’s AI-driven ecosystems, where data flows across products, agents, and systems, governance must be scalable, context-aware, and automated, and enterprise data models are what make this possible.

Effective governance starts with clear lineage, shared definitions, and explicit business rules. Enterprise data modeling encodes these elements directly into the design of data assets, ensuring that each table, relationship, and entity is governed by intent rather than interpretation.

Modeling makes data governance embedded, structural, and consistently enforced, instead of being a separate layer bolted on later.

Well-designed enterprise data models also act as scaffolding for metadata-based governance. When these models define sensitivity levels, classifications, relationships, and usage contexts, the platform can automate a large part of the governance process.
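A minimal sketch of metadata-driven enforcement: sensitivity levels are declared on columns in the model, and a generic function masks anything above the reader’s clearance. The levels, roles, and the `***` mask are hypothetical; the point is that the policy reads the model’s metadata instead of hard-coding column names.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical sensitivity levels, ordered from least to most sensitive.
LEVELS = ["public", "internal", "confidential", "pii"]


@dataclass(frozen=True)
class Column:
    name: str
    sensitivity: str  # one of LEVELS, declared in the data model


# Hypothetical clearances: the highest level each role may read unmasked.
ROLE_CLEARANCE: Dict[str, str] = {"analyst": "internal", "finance": "confidential", "dpo": "pii"}


def apply_policy(row: Dict[str, object], columns: List[Column], role: str) -> Dict[str, object]:
    """Return the row with every column above the role's clearance masked."""
    allowed = LEVELS.index(ROLE_CLEARANCE.get(role, "public"))  # unknown roles get "public"
    result: Dict[str, object] = {}
    for col in columns:
        value = row.get(col.name)
        result[col.name] = value if LEVELS.index(col.sensitivity) <= allowed else "***"
    return result


# Hypothetical column metadata as it might be declared in the model.
customer_columns = [
    Column("customer_id", "internal"),
    Column("email", "pii"),
    Column("lifetime_value", "confidential"),
]
```

Adding a new `pii` column to the model is enough to have it masked for analysts; no policy code needs to change.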

Data models provide the foundations for metadata and classification, policy automation, lineage-based controls, and policy enforcement based on where data originated and how it has been transformed.

This also supports fine-grained access and regulated data flows, ensuring that data products meet regulatory requirements such as GDPR and HIPAA.

Governance thus shifts from manual oversight to automated, model-driven enforcement, allowing enterprises to scale AI systems and trustworthy data without a matching rise in operational overhead.
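Lineage-based controls can be sketched by propagating tags declared at source systems to every downstream dataset. This Python example uses the standard library’s `graphlib.TopologicalSorter` (Python 3.9+) to process datasets in dependency order; the dataset names, the `pii` and `gdpr` tags, and the publishing rule are hypothetical. It also assumes tags are always inherited, whereas a real platform might let an anonymising transformation remove them.

```python
from graphlib import TopologicalSorter
from typing import Dict, Set

# Hypothetical lineage: each dataset maps to the upstream datasets it is derived from.
LINEAGE: Dict[str, Set[str]] = {
    "crm.customers": set(),
    "web.events": set(),
    "staging.customer_events": {"crm.customers", "web.events"},
    "marts.churn_features": {"staging.customer_events"},
    "marts.traffic_summary": {"web.events"},
}

# Hypothetical tags declared on source systems in the data model.
SOURCE_TAGS: Dict[str, Set[str]] = {"crm.customers": {"pii", "gdpr"}, "web.events": set()}


def propagate_tags(lineage: Dict[str, Set[str]], source_tags: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Each dataset inherits the union of its upstream datasets' tags (assumed rule)."""
    tags: Dict[str, Set[str]] = {}
    # static_order() yields every dataset after all of its upstream datasets.
    for dataset in TopologicalSorter(lineage).static_order():
        inherited = set().union(*(tags[up] for up in lineage.get(dataset, set())))
        tags[dataset] = inherited | source_tags.get(dataset, set())
    return tags


def may_publish_externally(dataset: str, tags: Dict[str, Set[str]]) -> bool:
    """Hypothetical policy: anything carrying a `pii` tag stays internal."""
    return "pii" not in tags[dataset]
```

Here `marts.churn_features` inherits `pii` and `gdpr` two hops away from `crm.customers`, so the policy blocks its external publication, while `marts.traffic_summary`, derived only from `web.events`, remains publishable.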

With a consistent foundation, models ensure that analytical systems, machine learning workflows, and AI agents operate on structured, governed, and meaningful data.


The future of data modeling will be defined by whether organisations can eliminate ambiguity at the source. Buyers of insights, whether internal or external, will no longer tolerate models that mask inconsistencies, hide technical debt, or rely on tribal definitions. A model that cannot guarantee semantic clarity (shared meaning, stable definitions, and predictable behaviour) will fail long before data quality even becomes a topic.

The pressure for trustworthy, interoperable data will expose modelling shortcuts the same way stale data exposes operational gaps.

The primary pillars for making your data modelling approach future-ready are:

A future-proof data model should start with one principle: it must serve business goals, not just technical preferences. Models that optimise for elegance instead of outcomes age quickly. The organisations that thrive are the ones that align modelling decisions to revenue goals, customer outcomes, and the metrics that actually drive the business. When the model reflects how the business creates value, it stays relevant even as tools, teams, and architectures change.

Static, rigid models slow down experimentation and force teams into long lead times before any question can be answered. Future-proofing means designing structures that minimise friction, like clear domains, stable definitions, and patterns for integrating new data without rewriting everything upstream. The shorter the path from raw data to trusted insight, the more adaptable the organisation becomes.

Whether launching new features, entering new markets, or monetising data, speed depends on how flexible the underlying model is. If every new use case requires reworking core schemas, the model becomes a bottleneck. But if the model is built around durable business concepts, with well-defined extension points for new products or signals, teams can move without waiting for a modelling overhaul. Governance embedded early keeps velocity high without compromising trust.

Far too often, governance is added after the fact, creating bottlenecks. A future-proof model bakes governance into the design, with elements like consent rules, access controls, and policy constraints becoming part of the modelling pattern, not an overlay. This reduces risk while increasing speed, because compliant-by-default data can move without constant manual checks or approvals.

Quality breaks when definitions drift, sources proliferate without lineage, or logic is embedded in downstream transformations instead of upstream models. A resilient model makes quality visible: clear ownership, transparent lineage, and definitions that don’t change subtly with each new feature request. When quality is embedded at the modelling layer, trust scales with the organisation instead of eroding as data volume grows.

Data modeling has always been foundational for organising data and making data usable by both humans and machines at enterprise scale.

Organisations that treat modeling as a strategic discipline, embedding governance, aligning to business outcomes, and building for AI readiness, will move faster, trust their data more, and build systems that hold up as complexity grows. Organisations that fail to do so risk paying the hidden tax of ambiguity: in rework, in broken or hallucinated AI outputs, and in inefficient decision-making.

Data models enhance AI by providing consistent, structured, and semantically rich data that reduces ambiguity, strengthens feature engineering, and improves model reliability. They also give AI agents clear context for accurate predictions and reasoning.

Data modeling provides a foundation for data products by clearly defining relationships, structures, and business rules. It also ensures interoperability, consistency, and quality, keeping data products reusable, reliable, AI-ready, and aligned with enterprise governance and domain requirements.

Many enterprise data modeling initiatives fail because they remain documentation exercises, disconnected from actual delivery flows. Without integration with governance automation, data products, and AI pipelines, these models quickly become outdated. Embedding modeling into product lifecycles and platforms drives adoption, relevance, and measurable business impact.


Modern Data 101 is a movement redefining how the world thinks about data. A community built by the same team behind the world’s first data operating system, Modern Data 101 sits at the intersection of data, product thinking, and AI. Spread across 150+ countries, the community brings together a global network of practitioners, architects, and leaders who are actively building the next generation of data systems.

At its core, Modern Data 101 exists to simplify the journey from raw data to tangible and observable impact. It advocates high-potential data systems and next-gen architectures to unify and activate insights and automation across analytics, applications, and operational workflows at the edge.

In a world shifting from data stacks to AI ecosystems, Modern Data 101 helps teams not just navigate the change but lead it.
