Facilitated by The Modern Data Company in collaboration with the Modern Data 101 Community

Data volumes are higher than ever across industries, and manufacturing is no exception.

Sensors embedded in CNC machines, robotic arms, conveyor belts, and environmental systems collectively produce millions of data points per hour. For manufacturers racing toward AI-driven operations, such as predictive maintenance, real-time quality control, and autonomous scheduling, data is the raw material of competitive advantage. But how that data is collected, stored, governed, and served to AI models is a strategic choice with long-term consequences.

Two architectural philosophies dominate today's debate: the Modern Data Stack (MDS) and the Unified Data Platform (UDP). For manufacturing leaders, understanding how they differ, and which one to adopt, is critical to sustainable growth.

The Modern Data Stack emerged as a best-of-breed ecosystem. You pick specialised tools for ingestion, transformation, orchestration, storage, and visualisation, where each is optimised for a specific function.

A typical MDS in a manufacturing context might combine a streaming ingestion layer, a cloud data warehouse, a transformation layer, and a business intelligence front-end. Each component is selected for its excellence in a particular domain, and the assembly is glued together by data pipelines.

This approach offers flexibility. Teams can adopt cutting-edge tools and scale components independently. For early-stage analytics use cases, like monitoring production KPIs or tracking downtime, this works well.

For facilities with strong IT infrastructure and specialist data engineers, the composability delivers genuine precision. But AI-driven manufacturing exposes where the architecture strains.

PLCs, SCADA systems, and industrial sensors speak protocols that cloud-native MDS tools were never built to handle. Every OT/IT bridge requires custom middleware. Every new machine on the floor is a new integration project.

MDS is optimised for batch processing. Even streaming pipelines accumulate latency at every handoff, including ingestion, validation, transformation, and warehouse commit. Sensor-to-inference lag can stretch to minutes. For a line running 600 units per minute, or a bearing anomaly that precedes failure by seconds, that gap is operationally fatal.
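
To make that gap concrete, the arithmetic below sums per-handoff latencies, assuming the handoffs run one after another, and converts the total lag into the number of units a line produces before a model can react. The per-stage latency values are hypothetical placeholders, not measurements from any real stack; only the 600 units-per-minute rate comes from the text above.

```python
# Hypothetical per-handoff latencies in seconds; real values vary widely by stack.
HANDOFF_LATENCY_S = {
    "ingestion": 15.0,
    "validation": 10.0,
    "transformation": 45.0,
    "warehouse_commit": 30.0,
}


def sensor_to_inference_lag(latencies: dict[str, float]) -> float:
    """Total lag when each handoff waits for the previous one to finish."""
    return sum(latencies.values())


def units_exposed(line_rate_per_min: float, lag_s: float) -> float:
    """Units produced on the line before a model can act on a reading."""
    return line_rate_per_min / 60.0 * lag_s


lag = sensor_to_inference_lag(HANDOFF_LATENCY_S)
print(f"lag = {lag:.0f} s, units exposed = {units_exposed(600, lag):.0f}")
```

Under these assumed numbers, a lag of under two minutes already means a thousand units leave the station before any corrective action is possible.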

MDS is pipeline-first: transformations are tightly coupled to specific outputs. In practice, a predictive maintenance pipeline builds its own features; a quality model rebuilds similar logic independently. There is no shared feature layer. Divergence compounds over time.

When an AI system recommends stopping a machine or adjusting process parameters, teams need to know why. In MDS environments, lineage is reconstructed across tools, for example, logs here, metadata there, and transformations elsewhere. Root-cause analysis becomes slow, fragmented, and unreliable.

Manufacturing environments are not static. Machines are recalibrated, sensors added, processes revised. In an MDS, every change propagates through distributed pipelines with implicit dependencies. Schema changes break downstream systems, updates require cross-team coordination, and fragility grows as the system scales.

In MDS environments, ownership attaches to pipelines and tools, instead of the data itself. Accountability for quality is unclear, the gap between domain experts and data teams widens, and issues take longer to resolve. In manufacturing, where domain knowledge is critical, that disconnect is costly.

Unified Data Platforms take a fundamentally different approach. Instead of assembling capabilities across tools, they integrate them into a cohesive system where ingestion, processing, governance, and access are inherently connected.

Operationally, this means a manufacturer can ingest real-time sensor streams, run feature engineering pipelines, train a predictive quality model, and deploy it to a production dashboard, all within one governed environment.

A UDP abstracts multiple data sources behind a single, standardised connectivity interface. Instead of bolting on a separate sidecar for each new integration, where sidecars often clash or duplicate one another, a small set of fundamental building blocks with shared conventions and semantics handles connectivity without overlapping capabilities. New machines on the floor connect through the same interface as enterprise systems: no new integration project and no new middleware.
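
A minimal sketch of what "one connectivity interface" can look like, assuming a Python `Protocol` that every source implements. `SourceConnector`, `PlcConnector`, `ErpConnector`, `Reading`, and `ingest` are hypothetical names, and both connectors are stubbed with in-memory values; a real OT connector would wrap an industrial protocol client (for example, OPC UA), which is omitted here.

```python
import time
from dataclasses import dataclass
from typing import Iterator, Protocol


@dataclass(frozen=True)
class Reading:
    source_id: str
    signal: str
    value: float
    ts: float  # epoch seconds


class SourceConnector(Protocol):
    """Hypothetical contract that every source, OT or IT, implements."""

    source_id: str

    def read(self) -> Iterator[Reading]: ...


class PlcConnector:
    """Illustrative OT source; a real one would wrap an industrial protocol client."""

    def __init__(self, source_id: str, tags: dict[str, float]) -> None:
        self.source_id = source_id
        self._tags = tags  # tag name -> latest value (stubbed in memory here)

    def read(self) -> Iterator[Reading]:
        now = time.time()
        for tag, value in self._tags.items():
            yield Reading(self.source_id, tag, value, now)


class ErpConnector:
    """Illustrative enterprise source; a real one would query the ERP system."""

    def __init__(self, source_id: str, rows: list[tuple[str, float]]) -> None:
        self.source_id = source_id
        self._rows = rows  # (signal, value) pairs (stubbed in memory here)

    def read(self) -> Iterator[Reading]:
        now = time.time()
        for signal, value in self._rows:
            yield Reading(self.source_id, signal, value, now)


def ingest(connectors: list[SourceConnector]) -> list[Reading]:
    """A single ingestion path: adding a machine means adding a connector, not middleware."""
    return [reading for c in connectors for reading in c.read()]


readings = ingest([
    PlcConnector("cnc-07", {"spindle_temp_c": 61.2, "vibration_mm_s": 3.4}),
    ErpConnector("erp", [("open_orders", 42.0)]),
])
```

The design choice being illustrated is structural typing: the ingestion path depends only on the shared contract, so the shop-floor source and the enterprise source are handled identically.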

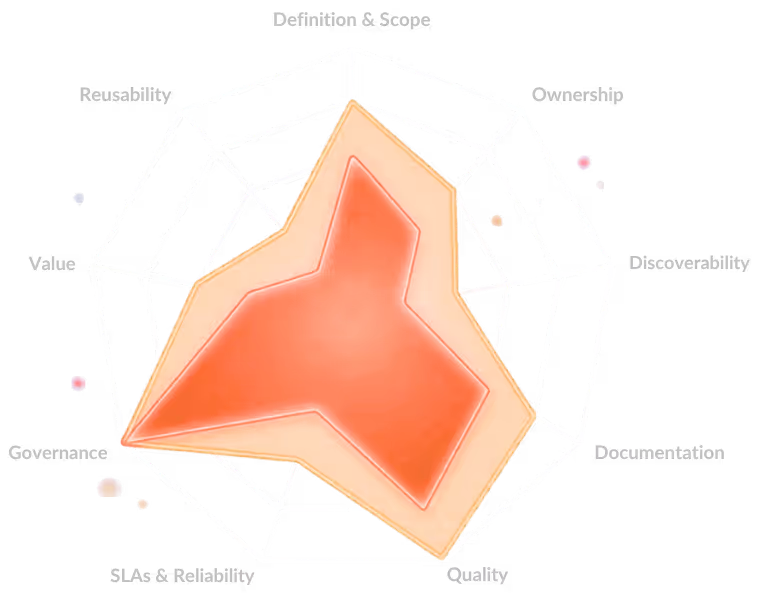

Unified Data Platforms shift the fundamental unit of work from pipelines to Data Products, which are self-contained units that bundle ingestion, transformation, metadata, governance, and SLOs together. Features engineered once inside a Data Product are reused across AI models rather than rebuilt independently for each use case. A thermal profile feature defined for predictive maintenance is immediately available to the quality model, with no scope for duplication and divergence.
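
The reuse idea can be sketched as follows, assuming plain Python dataclasses. `DataProduct`, its `owner` and `freshness_slo_s` fields, and the `thermal_rise` feature are illustrative assumptions, not any specific platform's API. The point is that the feature is defined once, inside a product with a named owner, and both models read the same definition.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Callable

Feature = Callable[[list[float]], float]


@dataclass
class DataProduct:
    """Hypothetical Data Product: data, owner, SLO, and features travel together."""

    name: str
    owner: str            # accountable domain team
    freshness_slo_s: int  # maximum acceptable data age, in seconds
    features: dict[str, Feature] = field(default_factory=dict)

    def register_feature(self, name: str, fn: Feature) -> None:
        # Refuse a second definition, so the same feature cannot diverge.
        if name in self.features:
            raise ValueError(f"feature {name!r} already defined in {self.name}")
        self.features[name] = fn

    def compute(self, name: str, window: list[float]) -> float:
        return self.features[name](window)


def thermal_rise(window: list[float]) -> float:
    """Temperature change across the window (last reading minus first)."""
    return window[-1] - window[0]


spindle = DataProduct("spindle_telemetry", owner="machining-ops", freshness_slo_s=5)
spindle.register_feature("thermal_rise", thermal_rise)
spindle.register_feature("thermal_mean", mean)


# Two models consume the same definitions instead of re-implementing them.
def maintenance_inputs(window: list[float]) -> dict[str, float]:
    return {"thermal_rise": spindle.compute("thermal_rise", window)}


def quality_inputs(window: list[float]) -> dict[str, float]:
    return {
        "thermal_rise": spindle.compute("thermal_rise", window),
        "thermal_mean": spindle.compute("thermal_mean", window),
    }
```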

A central control plane maintains complete visibility across the data ecosystem, overseeing metadata from heterogeneous polyglot sources. Lineage, provenance, and audit trails are first-class citizens of the platform. When an AI model flags a bearing anomaly or recommends a process adjustment, teams can trace the decision back to its source data instantly, within the same environment.
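
Tracing a decision back to source data becomes a simple graph walk once lineage lives in one place. The sketch below assumes lineage is stored as a parent map (each node lists the upstream nodes it was derived from); the node names and `trace_to_sources` are hypothetical and only illustrate the traversal.

```python
from collections import deque

# Hypothetical lineage: each node maps to the upstream nodes it was derived from.
LINEAGE: dict[str, list[str]] = {
    "alert:bearing_anomaly_0412": ["model:bearing_rul_v3"],
    "model:bearing_rul_v3": ["feature:vibration_rms", "feature:thermal_rise"],
    "feature:vibration_rms": ["source:cnc-07/accelerometer"],
    "feature:thermal_rise": ["source:cnc-07/spindle_temp"],
}


def trace_to_sources(node: str, lineage: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk upstream; returns root nodes (those with no recorded parents)."""
    seen = {node}
    queue = deque([node])
    sources: list[str] = []
    while queue:
        current = queue.popleft()
        parents = lineage.get(current, [])
        if not parents:
            sources.append(current)
        for parent in parents:
            if parent not in seen:  # guard against revisiting shared ancestors
                seen.add(parent)
                queue.append(parent)
    return sources


sources = trace_to_sources("alert:bearing_anomaly_0412", LINEAGE)
```

In an MDS, the same walk would need to stitch together logs, metadata, and transformation code from several tools before it could even start.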

Unified Data Platforms manage change through infrastructure-as-code and declarative configuration. Because components interoperate declaratively, a change degrades only part of the capability of the higher-order solutions built on top of it rather than breaking them outright. Lower-order building blocks remain available to fall back on, developers do not have to relearn a separate philosophy for every tool, and there is no per-tool integration overhead. A sensor recalibration or schema update propagates through controlled configuration, not as a silent cascade through fifteen pipelines.
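
One way to make "controlled configuration" concrete is a compatibility check that runs before a declared schema change is applied. This is a sketch under assumptions: schemas and consumer contracts are plain Python dicts, and `FieldSpec`, `breaking_changes`, and `apply_change` are hypothetical helpers rather than any named platform's mechanism.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    name: str
    dtype: str
    unit: str


Schema = dict[str, FieldSpec]
Contracts = dict[str, dict[str, str]]  # consumer -> {field name: required unit}

# Hypothetical declared schema (version 1) for a spindle telemetry data product.
SPINDLE_V1: Schema = {
    "spindle_temp": FieldSpec("spindle_temp", "float", "degC"),
    "vibration_rms": FieldSpec("vibration_rms", "float", "mm/s"),
}

# Consumers declare exactly which fields, in which units, they depend on.
CONSUMERS: Contracts = {
    "bearing_rul_model": {"vibration_rms": "mm/s"},
    "quality_model": {"spindle_temp": "degC", "vibration_rms": "mm/s"},
}


def breaking_changes(proposed: Schema, consumers: Contracts) -> list[str]:
    """Every declared consumer requirement the proposed schema would violate."""
    problems = []
    for consumer, required in consumers.items():
        for name, unit in required.items():
            spec = proposed.get(name)
            if spec is None:
                problems.append(f"{consumer}: missing field {name!r}")
            elif spec.unit != unit:
                problems.append(f"{consumer}: {name!r} is {spec.unit}, expected {unit}")
    return problems


def apply_change(current: Schema, proposed: Schema, consumers: Contracts) -> tuple[Schema, list[str]]:
    """Adopt the proposed schema only if no declared consumer breaks; otherwise keep current."""
    problems = breaking_changes(proposed, consumers)
    return (current, problems) if problems else (proposed, [])


# A recalibration that switches vibration units is caught before it propagates.
SPINDLE_V2 = {**SPINDLE_V1, "vibration_rms": FieldSpec("vibration_rms", "float", "in/s")}
schema, problems = apply_change(SPINDLE_V1, SPINDLE_V2, CONSUMERS)

# An additive change (a new sensor) breaks nobody and is accepted.
SPINDLE_V3 = {**SPINDLE_V1, "coolant_flow": FieldSpec("coolant_flow", "float", "L/min")}
schema_v3, problems_v3 = apply_change(SPINDLE_V1, SPINDLE_V3, CONSUMERS)
```

Because consumers declare their dependencies explicitly, the impact of a change is computed up front instead of being discovered downstream after something breaks.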

Legacy MDS evaluations often centred on analytics, including dashboards, reporting, and ad-hoc queries. In that context, composability wins handily. AI-driven manufacturing shifts the centre of gravity toward operational, closed-loop intelligence: a predictive maintenance model that must act on a bearing anomaly within seconds; a computer vision system flagging a weld defect before the next assembly stage begins; a scheduling algorithm rebalancing production lines in response to a supplier delay detected in an ERP feed.

These use cases demand low latency, unified feature stores, and tight feedback loops between model inference and operational action. Unified Data Platforms are increasingly architected for exactly this workload.
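
The shape of such a closed loop can be sketched as follows. The detector is a rolling z-score, a deliberately simple stand-in for a real predictive-maintenance model and not what any particular platform ships. `stop_machine` is a hypothetical actuator callback, and the window size and threshold are illustrative assumptions. Each reading is scored as it arrives and can trigger an action immediately, with no batch handoff in between.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Callable, Iterable, Optional


class RollingZScoreDetector:
    """Flags a reading that deviates sharply from a trailing window of recent readings."""

    def __init__(self, window: int = 50, threshold: float = 4.0) -> None:
        self.history = deque(maxlen=window)
        self.threshold = threshold

    def update(self, value: float) -> bool:
        """Score `value` against the window, then add it to the window."""
        anomalous = False
        if len(self.history) == self.history.maxlen:  # wait until the window is full
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                anomalous = True
        self.history.append(value)
        return anomalous


def control_loop(
    readings: Iterable[float],
    detector: RollingZScoreDetector,
    stop_machine: Callable[[int], None],
) -> Optional[int]:
    """Score each reading as it arrives; act on the first anomaly without a batch handoff."""
    for i, value in enumerate(readings):
        if detector.update(value):
            stop_machine(i)  # hypothetical actuator, e.g. a command to the line controller
            return i
    return None


vibration = [1.0, 1.2, 0.8, 1.0, 1.1, 1.0, 0.9, 5.0, 1.0]
stopped: list[int] = []
hit = control_loop(vibration, RollingZScoreDetector(window=5, threshold=3.0), stopped.append)
```

In this toy stream, the spike at index 7 triggers the stop callback on the same step it arrives.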

AI acts as the decision layer in smart manufacturing, turning factory data into real-time, actionable intelligence. It enables predictive maintenance by identifying failures before they happen, optimises production by dynamically adjusting processes, improves quality through automated defect detection, and strengthens supply chain coordination through demand forecasting.

More importantly, AI shifts operations from reactive to adaptive, where systems continuously learn, self-correct, and improve without constant human intervention.

Most AI projects collapse under poor data quality, fragmented pipelines, unclear ownership, and a lack of production-ready infrastructure. Teams jump to building models before establishing reliable, discoverable, and governed data foundations.

There’s also a mismatch between pilots and reality: projects show promise in isolation but fail to integrate into real workflows, systems, and decision loops.

Point solutions solve narrow problems quickly but create fragmentation over time.

Integrated platforms take longer to adopt but provide consistency, shared context, and scale across use cases.

Choose point solutions for speed and experimentation. Choose integrated platforms when you need reliability, interoperability, and long-term impact.

Modern Data 101 is a movement redefining how the world thinks about data. A community built by the same team behind the world’s first data operating system, Modern Data 101 sits at the intersection of data, product thinking, and AI. Spread across 150+ countries, the community brings together a global network of practitioners, architects, and leaders who are actively building the next generation of data systems.

At its core, Modern Data 101 exists to simplify the journey from raw data to tangible and observable impact. It advocates high-potential data systems and next-gen architectures to unify and activate insights and automation across analytics, applications, and operational workflows at the edge.

In a world shifting from data stacks to AI ecosystems, Modern Data 101 helps teams not just navigate the change but lead it.
