Facilitated by The Modern Data Company in collaboration with the Modern Data 101 Community

As soon as we lost the Data Management battle to the proliferation of silos and the chaos of cloud shift, awareness hit us hard. That very moment, the conversations in the boardroom changed gears and evolved. Data sipping coffee in the corner meant waste.

Enter Data Activation, which echoed: “If data isn't actively making you money or reducing your risk right now, it's dead weight and a liability.”

The critical pivot for every serious enterprise is moving from simply reporting on the past to reasoning about the future. This is where the hype of Artificial Intelligence meets the grim reality of the data swamp.

You cannot build a multi-billion-dollar AI agent strategy on data that is fragmented, ungoverned, and stuck in a queue.

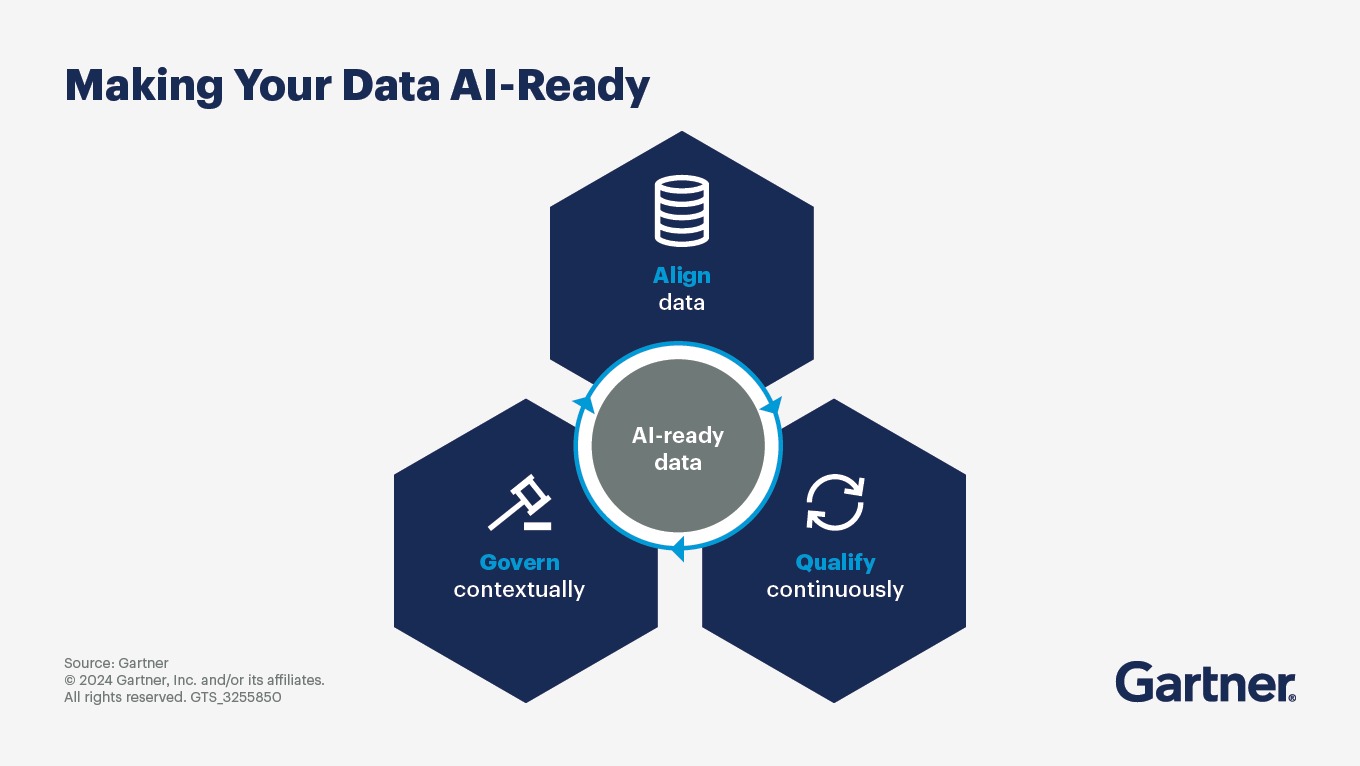

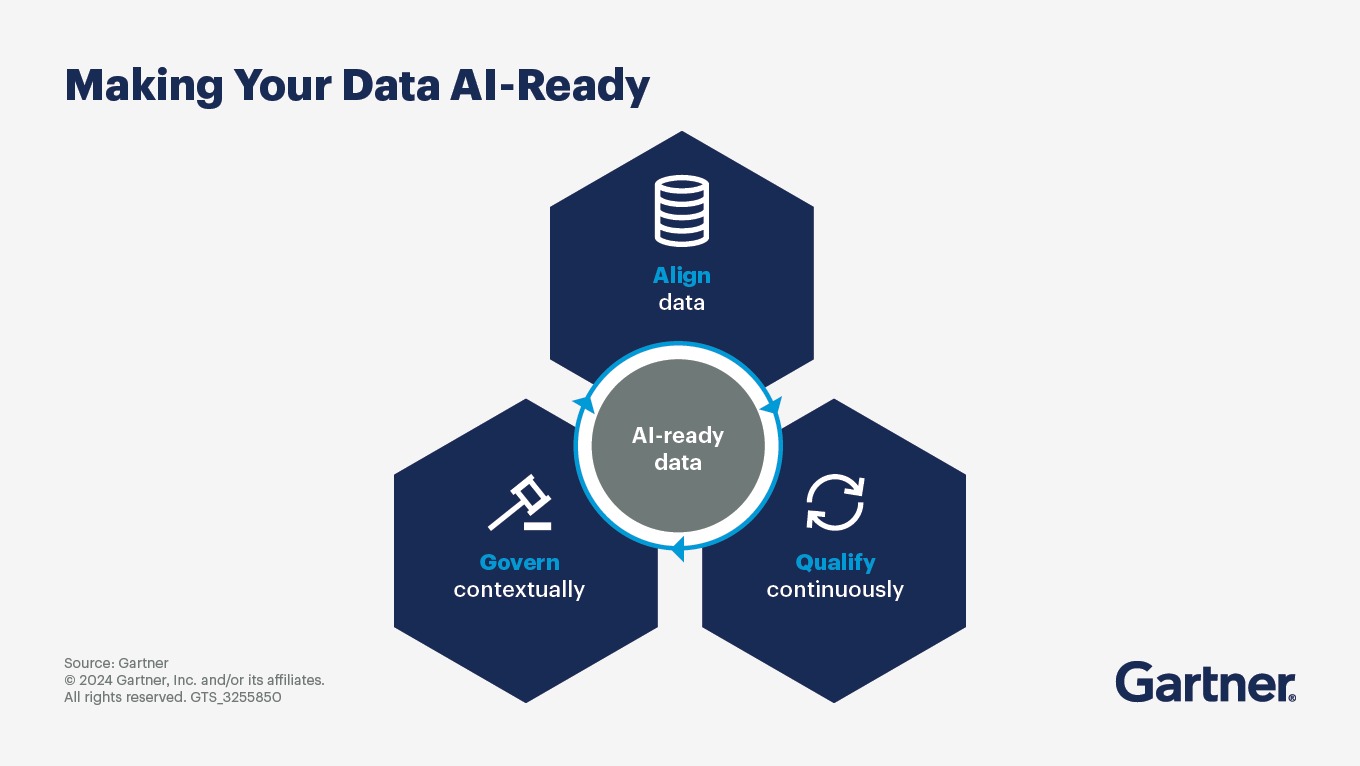

This fundamental requirement asks for AI-Ready Data.

AI-Ready data is data that is structured, contextualised, and semantically enriched such that machine learning or AI systems can directly interpret, reason over, and act on it with minimal additional transformation.

AI-ready data satisfies the following conditions:


Gone are the days when this requirement was a luxury. Today, it is essential to competitive advantage. If your competitors can onboard AI agents faster and with more accurate data than you, you’ve already lost the game. And this is no exaggeration. Compare a pre-AI era team with one that wields an army of agents.

But the question that might give enterprises some chills is this: how do you bridge the gap between today's data chaos and the business value needed to win in this era of generative intelligence?

The answer is simple: sincerely commit to Analytics Automation.

This has become the non-negotiable plumbing that bypasses manual processes and accelerates decisions, guaranteeing a trusted, transparent flow from raw data to machine precision.

Let’s be fair, we all know the demerits of data silos: slow delivery, governance bottlenecks, and tedious management. Silos are best at breeding uncertainty.

We need Unified Analytics in the very foundation of analytics automation to make AI-readiness sustainable and scalable. And unified analytics is a direct outcome of unified platform architectures.

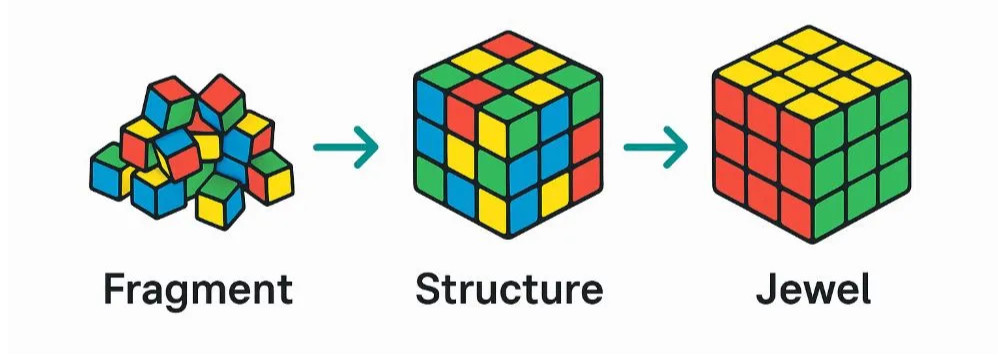

Fragmentation kills AI. Every copied dataset, siloed system, or manual workaround introduces latency, error, and compliance risk. An analytics automation platform enforces a single source of truth, data that isn't just stored, but governed and trusted from the moment of ingestion.

Governance can't be bolted on. It must be embedded, a multi-layered framework covering data lineage, access control, and process auditability. And it must travel with the data, whether it lives on-premise, in the cloud, or across multiple clouds. Not centralised, but unified through a context layer that applies policy consistently, wherever the data resides.
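To make "policy travels with the data" concrete, here is a minimal sketch. It assumes a policy object is attached to each dataset rather than to a storage system; the `Policy`, `GovernedDataset`, and `read` names and the location labels are hypothetical and not part of any specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical access policy attached to a dataset, not to a storage system."""
    allowed_roles: frozenset
    masked_columns: frozenset = frozenset()


@dataclass
class GovernedDataset:
    name: str
    location: str  # illustrative label only, e.g. "on-prem" or "cloud-a"
    rows: list
    policy: Policy


def read(dataset, role):
    """Apply the dataset's own policy, wherever the dataset lives."""
    if role not in dataset.policy.allowed_roles:
        raise PermissionError(f"role {role!r} may not read {dataset.name!r}")
    return [
        {k: ("***" if k in dataset.policy.masked_columns else v) for k, v in row.items()}
        for row in dataset.rows
    ]


# The same policy object governs both copies, so access behaves identically.
policy = Policy(allowed_roles=frozenset({"analyst"}), masked_columns=frozenset({"email"}))
rows = [{"customer_id": 1, "email": "a@example.com"}]
on_prem = GovernedDataset("customers", "on-prem", rows, policy)
cloud = GovernedDataset("customers", "cloud-a", rows, policy)
```

Because the check lives in `read` and the policy rides along with the dataset, an analyst sees the same masked view from either location, and any other role is refused in both.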

The real purpose of unified analytics is to accelerate decisions. With the consistent, trusted layer, the platform gives you the superpower to automate insights and swiftly turn data into action, thus delivering value that wins.

Before getting into specifics, we must address what an analytics automation platform is.

An analytics automation platform is a system that automatically ingests, transforms, and delivers data as reusable, decision-ready data products for both analytics and AI.

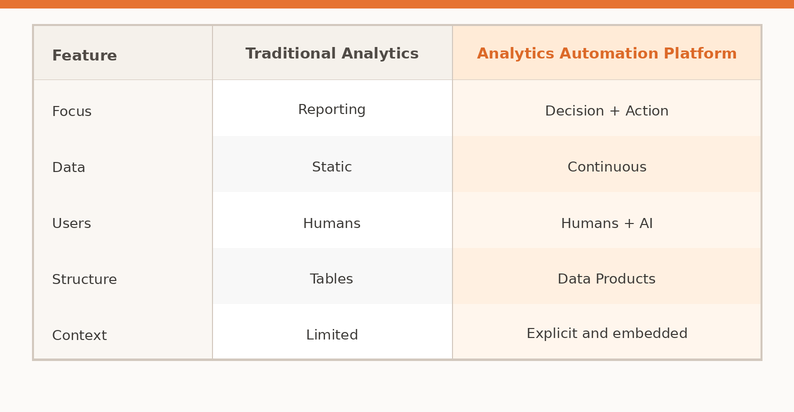

Unlike traditional data stacks that focus on pipelines and dashboards, an analytics automation platform focuses on:

Analytics Automation vs Traditional Data Platforms:

Now, let’s look into the components of an analytics automation platform to enable a better understanding of the build process. Key components include:


You must move beyond individual tools and introduce a control plane: a unifying layer that centrally manages how data flows, transforms, and is governed across the platform. In the context of the Data Developer Platform infrastructure standard, the control plane acts as the system’s “brain,” orchestrating workflows, enforcing data contracts, applying quality checks, and managing access and lineage in a consistent way.

Instead of stitching together separate tools for orchestration, governance, and monitoring, the control plane provides a single interface to define, execute, and observe the entire data lifecycle.

The Data Developer Platform Infrastructure Standard provides a full guide on how to automate data pipelines with governance and unify data orchestration and control.
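As a rough illustration of a control plane enforcing data contracts before a workflow step runs, consider the sketch below. It is not the Data Developer Platform's API; `DataContract`, `validate`, and `run_step` are hypothetical names, and the contract here is assumed to be nothing more than required columns and their Python types.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataContract:
    """Hypothetical contract: required columns mapped to expected Python types."""
    required: dict


def validate(contract, rows):
    """Return a list of readable violations; an empty list means the batch passes."""
    violations = []
    for i, row in enumerate(rows):
        for column, expected in contract.required.items():
            if column not in row:
                violations.append(f"row {i}: missing {column}")
            elif not isinstance(row[column], expected):
                violations.append(f"row {i}: {column} is not {expected.__name__}")
    return violations


def run_step(contract, rows, transform):
    """Gate a transformation: only batches that satisfy the contract are processed."""
    violations = validate(contract, rows)
    if violations:
        return {"status": "rejected", "violations": violations, "output": []}
    return {"status": "ok", "violations": [], "output": [transform(r) for r in rows]}


orders_contract = DataContract(required={"order_id": int, "amount": float})
good_batch = [{"order_id": 1, "amount": 10.0}]
bad_batch = [{"order_id": "x"}]
```

Calling `run_step(orders_contract, bad_batch, ...)` rejects the batch and reports every violation instead of letting malformed rows flow downstream, which is the behaviour a single control plane is meant to guarantee across all pipelines.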

Start by identifying the key business decisions and AI use cases you want to support. Instead of thinking in terms of pipelines or reports, think in terms of outcomes: customer insights, revenue optimisation, risk detection, or operational efficiency. These outcomes will define the data products your platform needs to produce.

Here’s a thorough guide on how to design data products to align data strategy with business goals.

Once use cases are clear, define the core entities, metrics, and relationships that represent your business. This includes creating consistent definitions for key concepts like customers, transactions, revenue, and engagement.

Learn more about the art of creating a single source of truth and standardising metrics across teams.
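One way to picture consistent definitions is a shared metric registry that every team calls instead of re-deriving numbers in its own queries. The sketch below assumes a toy transaction shape (`customer_id`, `amount`, `status`) and hypothetical metric names; real semantic layers are considerably richer.

```python
# Hypothetical shared registry: each metric is defined once, in one place.
METRICS = {
    # Revenue counts only completed transactions.
    "revenue": lambda txns: sum(t["amount"] for t in txns if t["status"] == "completed"),
    # An active customer has at least one completed transaction.
    "active_customers": lambda txns: len(
        {t["customer_id"] for t in txns if t["status"] == "completed"}
    ),
}


def compute(metric, txns):
    """Look up the agreed definition; unknown metrics fail loudly."""
    if metric not in METRICS:
        raise KeyError(f"no agreed definition for metric {metric!r}")
    return METRICS[metric](txns)


transactions = [
    {"customer_id": 1, "amount": 100, "status": "completed"},
    {"customer_id": 1, "amount": 50, "status": "completed"},
    {"customer_id": 2, "amount": 70, "status": "refunded"},
]
```

With this in place, a dashboard and a model feature pipeline that both call `compute("revenue", transactions)` cannot disagree about whether refunds count.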

As your platform scales, governance becomes increasingly essential. Implement data quality checks, lineage tracking, and access controls to ensure that data remains accurate, secure, and trustworthy. Without governance, even the most advanced platform will fail to deliver reliable insights or support AI systems effectively.
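A minimal sketch of two of these controls, a quality check and lineage tracking, might look like the following. The `null_rate` check and the `Lineage` class are illustrative assumptions, not a reference to any particular tool.

```python
from dataclasses import dataclass, field


def null_rate(rows, column):
    """Share of rows where the column is missing or None (0.0 for an empty batch)."""
    if not rows:
        return 0.0
    return sum(1 for r in rows if r.get(column) is None) / len(rows)


@dataclass
class Lineage:
    """Hypothetical lineage store: a list of (source, step, target) edges."""
    edges: list = field(default_factory=list)

    def record(self, source, step, target):
        self.edges.append((source, step, target))

    def upstream(self, target):
        """All datasets the target depends on, directly or indirectly."""
        found, stack = set(), [target]
        while stack:
            node = stack.pop()
            for src, _, tgt in self.edges:
                if tgt == node and src not in found:
                    found.add(src)
                    stack.append(src)
        return found


lineage = Lineage()
lineage.record("raw_orders", "deduplicate", "clean_orders")
lineage.record("clean_orders", "aggregate_daily", "revenue_daily")
```

When a quality check such as `null_rate` fails on `raw_orders`, `lineage.upstream` and its inverse tell you which downstream products and models are affected, which is what makes the governance actionable rather than decorative.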

Once your data is prepared and governed, make it accessible through multiple interfaces: BI dashboards, APIs, and direct integration with machine learning systems. The goal is to ensure that the same data products can be used for both human analysis and automated decision-making. A strong consumption layer ensures that your platform delivers value across the entire organisation, not just within data teams.
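The idea of one data product serving several consumers can be sketched as a single object with multiple read interfaces. The `DataProduct` class and its method names below are hypothetical, chosen only to show the same rows feeding an API, a dashboard tile, and a model.

```python
import json


class DataProduct:
    """Hypothetical data product exposing the same rows through several interfaces."""

    def __init__(self, name, rows):
        self.name = name
        self._rows = list(rows)

    def as_json(self):
        """Payload an API endpoint could return."""
        return json.dumps({"product": self.name, "rows": self._rows}, sort_keys=True)

    def summarize(self, column):
        """Aggregate a BI dashboard tile could display."""
        values = [row[column] for row in self._rows]
        return {"count": len(values), "total": sum(values)}

    def as_features(self, columns):
        """Row-major feature matrix a machine learning system could consume."""
        return [[row[c] for c in columns] for row in self._rows]


customer_spend = DataProduct(
    "customer_spend",
    [{"customer_id": 1, "spend": 120}, {"customer_id": 2, "spend": 80}],
)
```

All three views read from the same governed rows, so a human looking at the dashboard and an agent calling the API are reasoning over identical data.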

Finally, create mechanisms to feed outputs, such as user behaviour, model predictions, and business outcomes, back into the system. This allows your platform to learn and improve over time.

AI models are most quickly and reliably improved with feedback loops or closed-loop analytics systems like the data product lifecycle. This step is what makes your platform truly intelligent. It ensures that data is not just processed and consumed, but continuously refined based on real-world results.
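A closed loop can be as simple as comparing predictions with what actually happened and flagging the model when quality drifts. The sketch below assumes a rolling window and an accuracy threshold; `FeedbackLoop`, `window`, and `min_accuracy` are hypothetical names and values, not a prescribed design.

```python
from collections import deque


class FeedbackLoop:
    """Hypothetical rolling comparison of model predictions against real outcomes."""

    def __init__(self, window=100, min_accuracy=0.8):
        self.results = deque(maxlen=window)  # keeps only the most recent comparisons
        self.min_accuracy = min_accuracy

    def record(self, prediction, outcome):
        """Store whether the prediction matched the observed outcome."""
        self.results.append(prediction == outcome)

    def accuracy(self):
        """Rolling accuracy, or None until at least one outcome has been recorded."""
        if not self.results:
            return None
        return sum(self.results) / len(self.results)

    def needs_retraining(self):
        """True when rolling accuracy has fallen below the threshold."""
        acc = self.accuracy()
        return acc is not None and acc < self.min_accuracy


loop = FeedbackLoop(window=4, min_accuracy=0.75)
for prediction, outcome in [("churn", "churn"), ("stay", "churn"),
                            ("stay", "stay"), ("churn", "stay")]:
    loop.record(prediction, outcome)
```

In a fuller system, the `needs_retraining` signal would itself be an event the platform acts on, for example by scheduling a retraining job or routing cases to a human reviewer.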

Moving from analytics to intelligence demands more than feeding data to an AI. That's a sandbox, not a strategy. Enterprise AI must be woven into the business flow itself.

That starts with good AI orchestration, the layer that manages the full AI model lifecycle, ensuring every model consumes data that is secure, governed, and current.

But the real shift happens when complexity disappears for the business user. An AI-guided automation platform makes this possible, flagging errors, suggesting transformations, and recommending the next logical action at every step of a workflow. Powered by robust connectors across complex enterprise systems, it creates continuous feedback loops that keep intelligence flowing.

Analytics is shifting from reporting to reasoning. GenAI moves beyond predictions: ask a complex question in plain language and get a context-aware insight, not just an answer. Decision-making will never look the same again, and we all know it.

To repeat, this is already happening. AI copilots and assistants are fast becoming the standard interface for data professionals. But widespread mistrust and a lack of context often lead to poor performance or an inability to execute complex, domain-specific tasks.

When the analytics automation platform provides these copilot and assistant interfaces natively, it closes that trust gap and bridges domain context. They automate the repetitive, freeing analysts for strategic work, and bring deep compute power directly to the point of query.

What this unlocks:

The era of democratised, advanced modelling is here.

Turning models into persistent autonomous agents is the ultimate payoff of any Analytics Automation Platform. To achieve this, enterprises need to onboard AI agents that consume a unified, governed data layer and execute operational decisions autonomously. These agents can automate financial processes, optimise supply-chain routes, or personalise customer experiences, completing an end-to-end AI development suite.

Intelligence can never be static. With native MLOps integrated into automation pipelines for continuous delivery and model reliability, the platform must allow teams to build adaptive, context-aware AI that incorporates new data and learns from human feedback and real-world results.

Let’s face it, an Analytics Automation Platform is an investment, and that investment is protected by the platform's ability to scale and meet evolving enterprise demands. Scalability is not optional; it is a must-have. The architecture behind analytics automation acts as a booster, allowing you to deploy once and adapt everywhere while maintaining a single, consistent standard for AI-Ready Data across the business.

The future is not about the replacement of human potential but about augmenting and fusing human intuition with machine precision, where copilots validate the analyst’s hypotheses and accelerate modelling while achieving unlimited scalability.

Unified Analytics is set to be the language of domination for enterprises in the future. Next-gen AI can transform the modern enterprise by ensuring that every decision, at any scale, is powered by governed and contextualised intelligence.

To sum it all up: we have moved past the era of complex, manual data wrangling. The competitive mandate for the future: analytics automation for AI-ready data that stands on non-negotiable pillars of governance, scalability, and generative intelligence.

Analytics Automation is the backstage director that helps enterprises evolve into autonomous, proactive intelligence machinery. Make the move now to accelerate decisions and automate insights to transform your data strategy.

Modern Data 101 is a movement redefining how the world thinks about data. A community built by the same team behind the world’s first data operating system, Modern Data 101 sits at the intersection of data, product thinking, and AI. Spread across 150+ countries, the community brings together a global network of practitioners, architects, and leaders who are actively building the next generation of data systems.

At its core, Modern Data 101 exists to simplify the journey from raw data to tangible and observable impact. It advocates high-potential data systems and next-gen architectures to unify and activate insights and automation across analytics, applications, and operational workflows at the edge.

In a world shifting from data stacks to AI ecosystems, Modern Data 101 helps teams not just navigate the change but lead it.
