
Facilitated by The Modern Data Company in collaboration with the Modern Data 101 Community


Enterprises are racing to become AI-driven before their competitors, but that is easier said than done. Data accessibility has emerged as a core capability and a good indicator of how quickly teams can convert raw data into intelligence, automation, and business value.

🎢 According to IBM’s Data Differentiator, 82% of enterprises reported that data silos disrupted their critical workflows, and 68% of total enterprise data remains unanalysed.

To close this gap, enterprises need the ability to turn domain data into data products, expose them through governed interfaces, and make them available on demand to both humans and AI systems.

This shift requires more than system upgrades. When enterprises treat accessibility as a product, they gain faster decision-making, greater trust, and accelerated AI adoption.

In this article, we explore the most critical aspects of data accessibility and how the right kind of data platform ties up its loose ends.

Data accessibility is the ability of systems and AI agents to discover, access, understand, and use data seamlessly. The priority is to make data usable, not merely available.

As AI systems become first-class data consumers, organisations need to evolve toward AI-ready data access. This includes feature retrieval, vector access for search and other semantic use cases, and tight integration with model-serving environments. Without these in place, even the most capable AI initiatives suffer from low accuracy, delays, and engineering bottlenecks.
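To make "vector access for search" more concrete, here is a minimal sketch of semantic lookup over data assets using cosine similarity. It assumes toy, hand-written embeddings held in an in-memory dictionary; the asset names and the `top_k` helper are hypothetical, and a production platform would use an embedding model and a vector database instead.

```python
import math

# Hypothetical, hand-written embeddings for three data assets. A real platform
# would generate these with an embedding model and store them in a vector database.
INDEX = {
    "orders_daily": [0.9, 0.1, 0.0],
    "customer_churn_features": [0.1, 0.8, 0.3],
    "support_tickets": [0.0, 0.4, 0.9],
}


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, k=2):
    """Return the names of the k assets whose embeddings are closest to the query."""
    ranked = sorted(INDEX, key=lambda name: cosine(query_vec, INDEX[name]), reverse=True)
    return ranked[:k]


print(top_k([0.2, 0.9, 0.2]))
```

The query vector stands in for an embedded question such as "which customers are likely to leave?"; ranking by similarity rather than by exact table names is what lets AI systems find relevant data they were never told about explicitly.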

When enterprises build a strong data accessibility foundation, they gain a series of benefits that compound over time:

Accessibility also improves trust. When quality signals, metadata, and lineage are readily available, business teams get accurate reports and AI teams spend less time validating inputs. This builds a culture in which data becomes a shared asset instead of a point of friction.

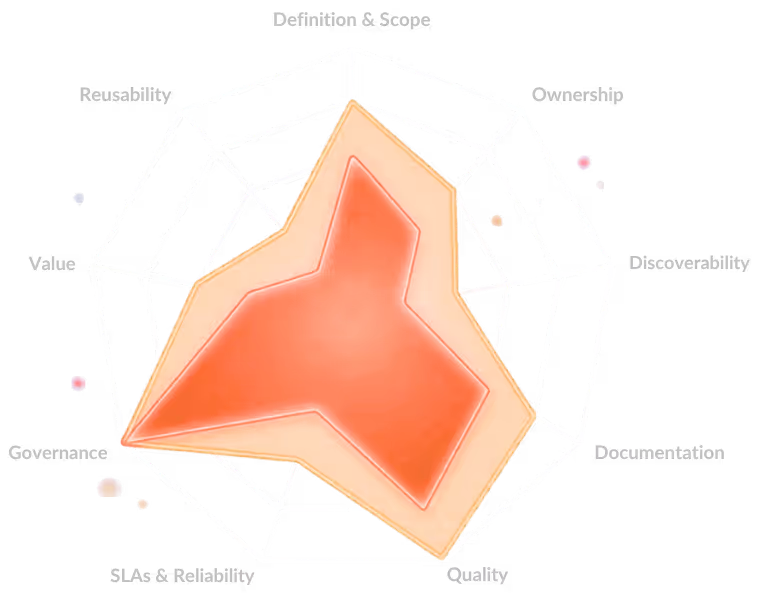

Despite growing awareness, organisations still struggle with enterprise data accessibility because their systems are largely legacy, fragmented, and not designed for programmatic access. A significant gap is the lack of data productisation, which leaves users with raw, inconsistent, and difficult-to-use assets.


Access becomes harder still when there is no unified, developer-friendly platform to standardise it, or when data quality issues keep piling up. Slow approval processes also turn governance into a blocker.


For data leaders, the objective is simple: translate data into measurable business impact. Achieving it, however, requires rethinking accessibility from a leadership and operating-model perspective.


Here’s how to put this into practice:

Data accessibility should have defined, measurable outcomes, such as speed to access, compliance adherence, shorter cycle times, and improved product delivery. It should be intentional, not incidental.

Reduce dependency on central teams by shifting to domain ownership and self-service analytics, backed by a strong platform. When accessibility depends on manual processes, it cannot scale.

Measure the right metrics, such as access lead time, request volume, end-user satisfaction, data reuse, and the downstream impact on AI and product delivery.

Data may be technical, but it needs to be usable at every level of the business. That requires clean interfaces, smarter discovery, and well-documented data products that can be consumed without deep engineering knowledge.
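Two of the metrics mentioned above, access lead time and request volume, can be computed directly from an access-request log. The sketch below assumes a hypothetical log of `(dataset, requested_at, granted_at)` tuples; the field layout and function names are illustrative, not taken from any particular platform, and counting requests per dataset is only a rough proxy for reuse.

```python
from collections import Counter
from datetime import datetime
from statistics import median

# Hypothetical access-request log: (dataset, requested_at, granted_at).
REQUESTS = [
    ("orders_daily", datetime(2024, 5, 1, 9), datetime(2024, 5, 1, 13)),
    ("orders_daily", datetime(2024, 5, 2, 10), datetime(2024, 5, 3, 10)),
    ("churn_features", datetime(2024, 5, 2, 8), datetime(2024, 5, 2, 10)),
]


def median_lead_time_hours(requests):
    """Median number of hours between an access request and its approval."""
    return median(
        (granted - requested).total_seconds() / 3600
        for _, requested, granted in requests
    )


def requests_per_dataset(requests):
    """Request volume per dataset; repeated requests hint at how widely data is reused."""
    return dict(Counter(dataset for dataset, _, _ in requests))


print(median_lead_time_hours(REQUESTS), requests_per_dataset(REQUESTS))
```

The median is used rather than the mean so that a single request stuck in review for days does not dominate the headline number.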

A Data Developer Platform (DDP) is a self-service platform designed to give developers, analysts, and AI teams fast, secure, and governed access to organisational data. It abstracts away complexity so that teams do not need to understand the underlying infrastructure, compliance rules, or other architectural details.

Here’s how the platform optimises data access for teams across the organisation.

Instead of ad-hoc approvals, create platform-driven workflows in which users discover data, request it, receive approval, and then use it, in that order. This reduces access lead time, accelerates analytics and AI development, and lowers the risk of unauthorised workarounds.
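The discover, request, approve, use sequence maps naturally onto a small state machine. This is a minimal sketch under the assumption that each request is tracked as an object with an explicit set of legal transitions; `Stage`, `TRANSITIONS`, and `AccessRequest` are hypothetical names, not part of any DDP API.

```python
from enum import Enum


class Stage(Enum):
    DISCOVERED = "discovered"
    REQUESTED = "requested"
    APPROVED = "approved"
    REJECTED = "rejected"
    IN_USE = "in_use"


# The only legal moves. Data can be used only after it has been requested and
# approved, so the workflow leaves no side door for unauthorised access.
TRANSITIONS = {
    Stage.DISCOVERED: {Stage.REQUESTED},
    Stage.REQUESTED: {Stage.APPROVED, Stage.REJECTED},
    Stage.APPROVED: {Stage.IN_USE},
    Stage.REJECTED: set(),
    Stage.IN_USE: set(),
}


class AccessRequest:
    """Tracks one user's request for one dataset through the workflow."""

    def __init__(self, user, dataset):
        self.user = user
        self.dataset = dataset
        self.stage = Stage.DISCOVERED
        self.history = [Stage.DISCOVERED]  # audit trail of every stage reached

    def advance(self, target):
        """Move to the target stage, refusing any transition not listed in TRANSITIONS."""
        if target not in TRANSITIONS[self.stage]:
            raise ValueError(f"cannot go from {self.stage.value} to {target.value}")
        self.stage = target
        self.history.append(target)


req = AccessRequest("ana", "orders_daily")
for step in (Stage.REQUESTED, Stage.APPROVED, Stage.IN_USE):
    req.advance(step)
print([s.value for s in req.history])
```

Because every request carries its own history, the same structure also yields the lead-time and audit data that governance teams need.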

A single interface should expose all data sources, vector databases, models, and data products. Access policies should be packaged with the asset rather than tied to the underlying systems. This reduces the maintenance burden and speeds up onboarding of new use cases.
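The idea of packaging the policy with the asset can be sketched as a data product object that carries its own allowed roles, behind one catalog that serves tables, vector indexes, and models alike. Everything here (`DataProduct`, `Catalog`, the role names, and the string handle returned by `access`) is a hypothetical illustration, assuming simple role-based rules.

```python
from dataclasses import dataclass, field


@dataclass
class DataProduct:
    name: str
    kind: str     # e.g. "table", "vector_index", "model"
    backend: str  # where the asset physically lives; consumers never see this
    allowed_roles: set = field(default_factory=set)  # the policy travels with the asset

    def permits(self, roles):
        """True if any of the caller's roles is allowed by this asset's policy."""
        return bool(self.allowed_roles & set(roles))


class Catalog:
    """Single entry point over heterogeneous assets (hypothetical interface)."""

    def __init__(self):
        self._products = {}

    def register(self, product):
        self._products[product.name] = product

    def access(self, name, roles):
        """Check the asset's own policy, then return an opaque handle to it."""
        product = self._products[name]
        if not product.permits(roles):
            raise PermissionError(f"roles {sorted(roles)} may not access {name}")
        return f"{product.kind}:{product.name}"


catalog = Catalog()
catalog.register(DataProduct("orders_daily", "table", "warehouse", {"analyst", "ml_engineer"}))
catalog.register(DataProduct("ticket_embeddings", "vector_index", "vector_db", {"ml_engineer"}))
print(catalog.access("ticket_embeddings", {"ml_engineer"}))
```

Since the check reads `allowed_roles` from the product itself, moving `orders_daily` to a different backend would not require rewriting its access rules.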


Static roles and manual reviews do not scale in AI-powered organisations. Policy-as-code evaluates compliance, security, and privacy rules dynamically, at request time. This reduces governance costs and lets thousands of users and AI agents access data safely.
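As a rough illustration of policy-as-code, the sketch below expresses rules as plain Python functions evaluated against each request. Dedicated policy engines exist for this in practice; here the rule names, the PII column list, the approved purposes, and the allowed regions are all hypothetical assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessContext:
    principal: str      # a user or an AI agent
    purpose: str        # declared reason for the request
    region: str         # where the request originates
    columns: frozenset  # columns being read


PII_COLUMNS = {"email", "phone"}
APPROVED_PII_PURPOSES = {"fraud_review", "customer_support"}
ALLOWED_REGIONS = {"eu", "us"}


# Each rule returns None when it has no objection, or a human-readable denial reason.
def pii_needs_approved_purpose(ctx):
    if ctx.columns & PII_COLUMNS and ctx.purpose not in APPROVED_PII_PURPOSES:
        return "PII columns require an approved purpose"
    return None


def region_is_allowed(ctx):
    if ctx.region not in ALLOWED_REGIONS:
        return f"region {ctx.region!r} is not permitted"
    return None


POLICIES = [pii_needs_approved_purpose, region_is_allowed]


def evaluate(ctx):
    """Run every rule at request time; any denial reason blocks the request."""
    reasons = [r for r in (rule(ctx) for rule in POLICIES) if r is not None]
    return not reasons, reasons


print(evaluate(AccessContext("report-bot", "marketing", "eu", frozenset({"email"}))))
```

Because rules are code, they can be versioned, reviewed, and tested like any other software, and adding a rule means appending a function to `POLICIES` rather than re-reviewing every role assignment.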

Lineage, context, data contracts, and quality signals should be embedded in the access experience. This greatly reduces the time users spend searching for and validating data, boosts trust, and improves the success rate of analytics and AI initiatives.
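A data contract and a quality signal derived from it can be as simple as a column specification and the share of rows that satisfy it. This sketch assumes a hypothetical contract for an "orders" data product that maps each column to an expected Python type and a nullability flag; real contracts usually also cover semantics, freshness, and ownership.

```python
# Hypothetical contract for an "orders" data product: column -> (type, nullable).
CONTRACT = {
    "order_id": (int, False),
    "amount": (float, False),
    "coupon_code": (str, True),
}


def violations(row):
    """List the ways a single row breaks the contract (empty list means it conforms)."""
    problems = []
    for column, (expected_type, nullable) in CONTRACT.items():
        value = row.get(column)  # a missing key and an explicit None are treated alike
        if value is None:
            if not nullable:
                problems.append(f"{column}: missing")
            continue
        if not isinstance(value, expected_type):
            problems.append(f"{column}: expected {expected_type.__name__}")
    return problems


def quality_score(rows):
    """Share of rows that satisfy the contract; an empty batch scores 0.0."""
    if not rows:
        return 0.0
    return sum(1 for row in rows if not violations(row)) / len(rows)


batch = [
    {"order_id": 1, "amount": 9.5, "coupon_code": None},
    {"order_id": 2, "amount": None},
    {"order_id": "3", "amount": 1.0, "coupon_code": "SPRING"},
    {"order_id": 4, "amount": 2.0},
]
print(quality_score(batch))
```

Surfacing a score like this next to the dataset, instead of in a separate monitoring tool, is what lets consumers judge fitness for use at the moment of access.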


Before users can request or access data, they need to know it exists. A data product registry acts as the searchable storefront of your DDP, surfacing available datasets, models, APIs, and data products with rich context such as ownership, quality scores, usage statistics, and lineage. Paired with AI-enabled search, it shifts discovery from tribal knowledge to self-serve exploration, which reduces time-to-insight and prevents teams from duplicating datasets.
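A registry entry bundles the context described above (owner, quality score, lineage) with the asset's name and description. The sketch below uses plain keyword matching as a stand-in for AI-enabled search, and every entry, owner, and score in it is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RegistryEntry:
    name: str
    owner: str
    description: str
    quality: float        # hypothetical 0-1 quality score
    upstream: tuple = ()  # lineage: assets this one is derived from


REGISTRY = [
    RegistryEntry("orders_daily", "sales-domain", "Daily order totals by region", 0.97, ("orders_raw",)),
    RegistryEntry("orders_raw", "sales-domain", "Raw order events from checkout", 0.72),
    RegistryEntry("churn_features", "growth-domain", "Customer churn features for ML models", 0.91,
                  ("orders_daily", "support_tickets")),
]


def search(query, min_quality=0.0):
    """Keyword search over names and descriptions, best-quality matches first."""
    terms = query.lower().split()
    hits = [
        entry for entry in REGISTRY
        if entry.quality >= min_quality
        and all(term in f"{entry.name} {entry.description}".lower() for term in terms)
    ]
    return sorted(hits, key=lambda entry: entry.quality, reverse=True)


for entry in search("order"):
    print(entry.name, entry.owner, entry.quality, entry.upstream)
```

Ranking by quality and showing the owner and upstream assets in every result is what helps a user pick the curated `orders_daily` over the raw feed instead of building yet another copy.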

Data governance should shift from restricting access to designing automated safeguards and guardrails. When platform teams focus on enabling access, productivity improves, friction drops, and adoption of AI and data products increases.


The major shift in the data space today is that data is moving from something you query to something that answers you.

Even today, a large volume of data lives in warehouses, lakes, and APIs, and reaching it requires SQL knowledge, the right permissions, the right tools, and knowing that the data exists in the first place. Accessibility is still mostly an engineering problem.

A few crucial changes are underway:

As data becomes more accessible, access control becomes the hard problem. The future is fine-grained, context-aware permissions: not just "can user X see table Y?" but "can this AI agent, acting on behalf of user X, access this data for this specific task?"
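One way to answer "can this AI agent, acting on behalf of user X, access this data for this specific task?" is to grant only what the agent, the delegating user, and the task scope all allow. This is a minimal sketch assuming hypothetical grant tables held in dictionaries; real systems would resolve these from an identity provider and a policy engine.

```python
# Hypothetical grants: which datasets each principal may read.
USER_GRANTS = {"ana": {"orders_daily", "churn_features", "payroll"}}
AGENT_GRANTS = {"report-bot": {"orders_daily", "churn_features", "support_tickets"}}
TASK_SCOPES = {"weekly_sales_summary": {"orders_daily"}}


def can_access(agent, on_behalf_of, task, dataset):
    """Allow only what the agent, the delegating user, AND the task all permit."""
    effective = (
        AGENT_GRANTS.get(agent, set())
        & USER_GRANTS.get(on_behalf_of, set())
        & TASK_SCOPES.get(task, set())
    )
    return dataset in effective


print(can_access("report-bot", "ana", "weekly_sales_summary", "orders_daily"))
```

Taking the intersection means an agent can never see more than the person it acts for, and scoping by task narrows access further, so `payroll` stays out of reach even though `ana` could read it herself.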

For AI to answer questions about data reliably, it needs a business-logic-aware layer above raw tables: something that knows "revenue" means one specific calculation, not any column named revenue. A growing number of tools are becoming this connective layer between LLMs and trustworthy data. The layer is underinvested today and will matter enormously.
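A business-logic-aware layer can be sketched as a set of governed metric definitions that compile to SQL, so that "revenue" always expands to the same calculation no matter who (or which model) asks. The definition below, `SUM(amount - discount - refund)` over completed orders, along with the table, column, and dimension names, is a hypothetical example rather than a recommended formula.

```python
# Hypothetical semantic-layer definition; table, column, and dimension names are illustrative.
METRICS = {
    "revenue": {
        "table": "orders",
        "expression": "SUM(amount - discount - refund)",
        "filters": ["status = 'completed'"],
        "dimensions": {"region", "channel"},  # the only columns you may group by
    },
}


def compile_metric(metric, group_by=None):
    """Turn a governed metric name into SQL so every consumer gets the same definition."""
    spec = METRICS[metric]  # unknown metrics raise KeyError instead of guessing
    if group_by is not None and group_by not in spec["dimensions"]:
        raise ValueError(f"{group_by!r} is not a dimension of {metric!r}")
    select = f"{spec['expression']} AS {metric}"
    if group_by:
        select = f"{group_by}, {select}"
    sql = f"SELECT {select} FROM {spec['table']}"
    if spec["filters"]:
        sql += " WHERE " + " AND ".join(spec["filters"])
    if group_by:
        sql += f" GROUP BY {group_by}"
    return sql


print(compile_metric("revenue", group_by="region"))
```

An LLM only has to map a question to a metric name and a permitted dimension; the calculation itself comes from the definition, and the dimension allow-list keeps arbitrary text out of the generated SQL.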

LLMs are collapsing the gap between "I have a question" and "I have an answer." Text-to-SQL, semantic search, and AI agents mean a non-technical person can interrogate a petabyte-scale dataset the same way they would ask a colleague. The bottleneck shifts from "can you query it?" to "can the model accurately understand your schema and intent?"


Data accessibility today is about enabling high-velocity teams, scalable governance, and AI-powered decision-making. Companies that embed accessibility into their products, platforms, and AI stack will be able to innovate faster and build a more resilient competitive position.

To stay ahead, organisations will need to invest in:

Accessibility is the foundation of the modern data organisation; those who master it will define the next decade of AI-led growth.

Many enterprises struggle with inconsistent data ownership and fragmented systems, which make it difficult to offer secure, unified, self-service access without burdening data teams.

Better data accessibility gives AI teams fast access to high-quality datasets, which enables rapid iteration by reducing preparation time and provides dependable insights across multiple business use cases.

Many accessibility initiatives fail for reasons that go beyond tooling or budget: poor change management, unclear ownership, and misalignment between builders and governance teams. The result is low adoption even after significant technology investment.


Modern Data 101 is a movement redefining how the world thinks about data. A community built by the same team behind the world’s first data operating system, Modern Data 101 sits at the intersection of data, product thinking, and AI. Spread across 150+ countries, the community brings together a global network of practitioners, architects, and leaders who are actively building the next generation of data systems.

At its core, Modern Data 101 exists to simplify the journey from raw data to tangible and observable impact. It advocates high-potential data systems and next-gen architectures to unify and activate insights and automation across analytics, applications, and operational workflows at the edge.

In a world shifting from data stacks to AI ecosystems, Modern Data 101 helps teams not just navigate the change but lead it.
