Facilitated by The Modern Data Company in collaboration with the Modern Data 101 Community

Most enterprises today are not running a unified data platform. They are running a collection.

A vendor made a dozen acquisitions, painted every interface the same shade of blue, and called the result a platform.

Analysts applauded the move, the brochure looked compelling, and then the engineering team discovered that changing a security policy in the ingestion layer still required a manual update in the analytics layer.

This is the state of enterprise data management in 2026, and it costs more than most organisations admit.

In this guide, we break down the five criteria that separate a genuinely unified data platform from an expensive assembly of disconnected tools. Whether you are evaluating your first enterprise-wide platform or questioning whether your current stack is holding your AI ambitions hostage, this guide gives you the evaluation framework you need.

Vendors today sell the dream of a Single Pane of Glass: one dashboard, one login, one contract. They acquire a dozen different tools, align the visual branding, and call it an integrated data platform.

But a Single Pane of Glass is often just a window into a messy room.

As the Modern Data 101 community has documented in depth, this trend is not platform evolution but a defensive consolidation strategy masquerading as unification. Vendors like Databricks and Snowflake are acquiring open-format companies (Tabular for Iceberg governance, Crunchy Data for Postgres) not because they want to deliver architectural purity, but because they want to control the gravity of your data while there is still market share to capture.

The test is simple: if you are manually syncing security policies between your ingestion and analytics layers, or recreating user roles separately in two tools from the same vendor, you do not have a platform. You have a digital facade.

In the age of the agentic enterprise, AI agents cannot reason across systems that fail to share a common logic layer. They cannot guess that Client_Code in System B is the same entity as ID_01 in System A. A fragmented platform does not just slow down analysts; it breaks AI initiatives, and the failures surface downstream where they are most expensive to fix.
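To make the Client_Code and ID_01 problem concrete, here is a minimal sketch of what a shared logic layer contributes. The mapping table, the system names, and the `to_canonical` helper are all hypothetical and exist only for illustration; a real platform would hold this mapping in its metadata layer rather than in application code.

```python
# Hypothetical shared mapping: (system, local field name) -> canonical field name.
# In a fragmented stack this knowledge lives in people's heads or in per-tool config.
CANONICAL_FIELDS = {
    ("system_a", "ID_01"): "customer_id",
    ("system_b", "Client_Code"): "customer_id",
}


def to_canonical(system: str, record: dict) -> dict:
    """Rename system-specific fields to their canonical names; leave others as-is."""
    return {
        CANONICAL_FIELDS.get((system, field), field): value
        for field, value in record.items()
    }


from_a = to_canonical("system_a", {"ID_01": "C-17", "region": "EU"})
from_b = to_canonical("system_b", {"Client_Code": "C-17", "tier": "gold"})

# Both records now expose the same key, so an agent can join them without guessing.
print(from_a["customer_id"] == from_b["customer_id"])
```

Without the shared mapping, the two records have no field in common and the join has to be inferred, which is exactly the guess an agent cannot safely make.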

Before diving into the detailed validations and evaluations, here is a quick checklist to borrow and adapt when evaluating your next data platform vendor.

The biggest high-stakes delusion in enterprise data management is that a shared login equals shared logic.

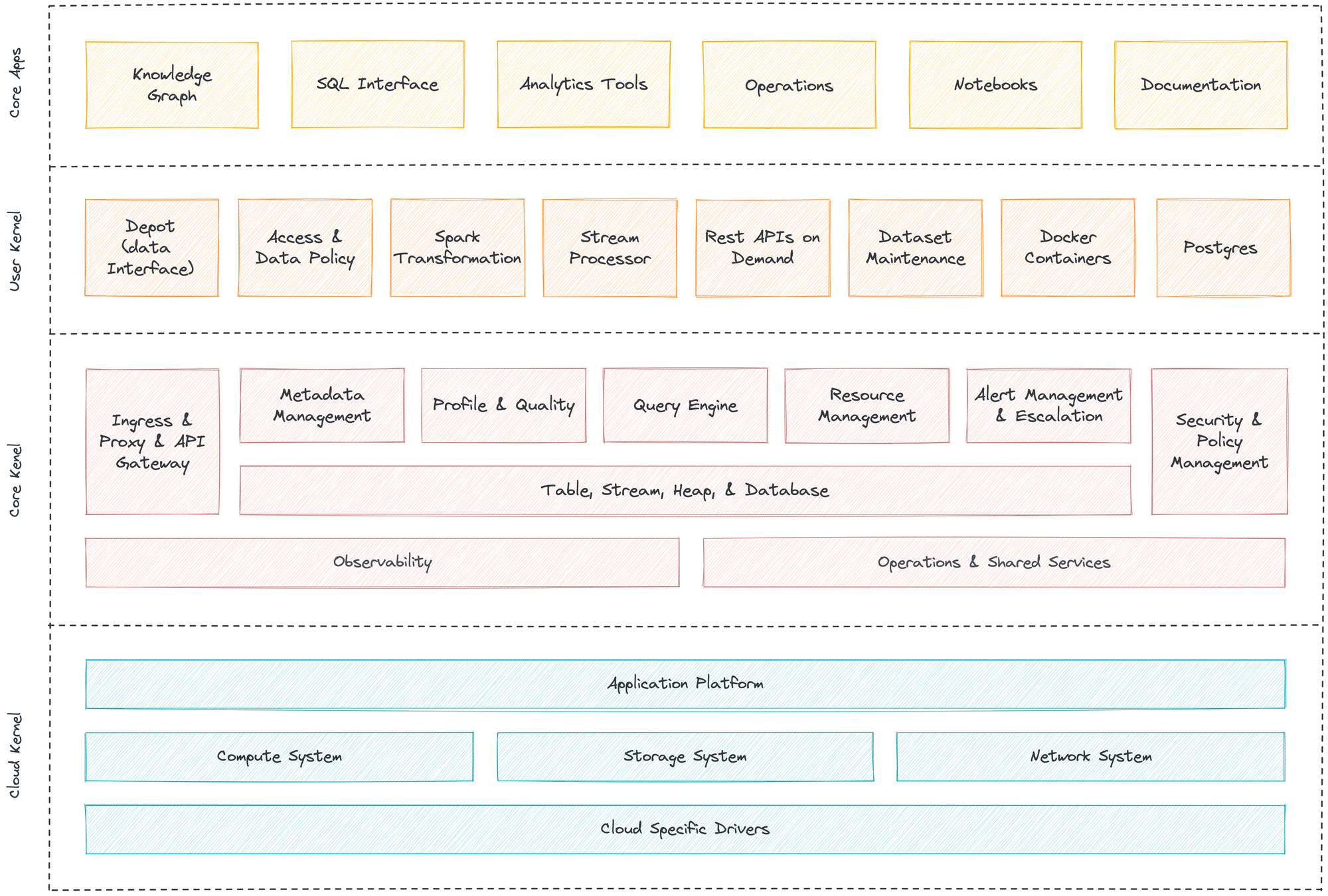

A truly unified data platform shares a single DNA for security, metadata, and storage: what practitioners call the Single Kernel of Truth.

Think of it like the operating system of a smartphone. When you grant location permission on your phone, every integrated app understands that permission immediately. You do not negotiate separately with the GPS app, the Maps app, and the Weather app.

If your enterprise data platform requires a "sync" or a "bridge" to propagate a policy change from one layer to another, you are not running an operating system. You are running a souvenir shop of disconnected tools.

How to test this in a vendor demo: Ask the vendor to change a security policy in the ingestion layer and demonstrate, in real time, how it is reflected in the analytics layer without any manual sync step. If they cannot do this, the architecture is NOT unified at the kernel level.
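The demo test can be pictured with a small sketch. This is an illustration under stated assumptions, not any vendor's architecture: `PolicyKernel` and `Layer` are hypothetical names, and the point is only that both layers consult one policy object at read time instead of holding their own copies.

```python
from dataclasses import dataclass, field


@dataclass
class PolicyKernel:
    """Hypothetical central policy store shared by every layer."""
    masked_columns: set = field(default_factory=set)

    def mask(self, column: str) -> None:
        self.masked_columns.add(column)


@dataclass
class Layer:
    """A platform layer that looks up policy in the kernel on every read."""
    name: str
    kernel: PolicyKernel

    def read(self, row: dict) -> dict:
        # No local copy of the policy exists, so there is nothing to sync.
        return {
            col: "***" if col in self.kernel.masked_columns else value
            for col, value in row.items()
        }


kernel = PolicyKernel()
ingestion = Layer("ingestion", kernel)
analytics = Layer("analytics", kernel)

row = {"email": "a@example.com", "country": "DE"}
kernel.mask("email")  # the policy is changed once, in one place

print(ingestion.read(row))
print(analytics.read(row))
```

If each layer instead kept its own `masked_columns` set, the second `read` would still return the raw email until someone copied the change across, which is the manual sync step the test is designed to expose.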

For a deeper look at what a Single Kernel architecture looks like in practice, the Data Developer Platform standard provides an open framework that defines what true architectural unification requires, including a Central Control Plane that anchors security, metadata, and governance across the full data product lifecycle.

A data platform without a native semantic layer is like a city where every building runs its own private generator.

In a poorly designed power grid, each building owns a private generator. When demand grows, they buy bigger machines. The result is inefficiency, duplication, and single points of failure. A modern city runs a unified power grid: plug in anywhere and the electricity arrives at a standard voltage.

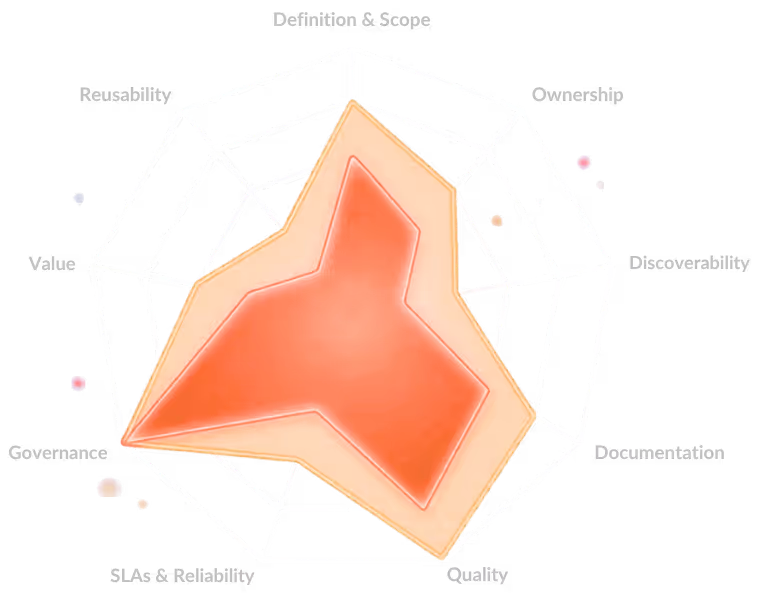

The semantic layer is the power grid of the data platform. Without it, every team in the organisation builds their own private definition of "Revenue," "Customer," or "Churn." This means analysts spend more time reconciling definitions than running analysis, and AI agents cannot trust that two data products mean the same thing when they use the same term.

As Frances O'Rafferty suggests, a well-implemented semantic layer does three things a siloed stack cannot:

The evaluation question: Can you define a business metric once in the platform and have it automatically honoured across every downstream tool, BI report, and AI agent, with no duplication of logic?
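One way to picture "define once, honour everywhere" is a single metric registry that every consumer reads from. The sketch below is a simplified illustration; the `METRICS` registry, the `metric` decorator, and the revenue formula (amount minus refund) are assumptions made for the example, not a description of any particular semantic layer product.

```python
from typing import Callable

# Hypothetical registry: the one place where a business metric is defined.
METRICS: dict = {}


def metric(name: str) -> Callable:
    """Register a metric definition; refuse a second definition under the same name."""
    def register(fn: Callable) -> Callable:
        if name in METRICS:
            raise ValueError(f"metric {name!r} is already defined")
        METRICS[name] = fn
        return fn
    return register


@metric("revenue")
def revenue(rows: list) -> float:
    # Assumed definition for illustration: gross amount net of refunds.
    return sum(r["amount"] - r["refund"] for r in rows)


def bi_report(rows: list) -> dict:
    """A BI consumer: looks the metric up instead of re-implementing it."""
    return {"revenue": METRICS["revenue"](rows)}


def agent_context(rows: list) -> str:
    """An AI-agent consumer: same lookup, different presentation."""
    return f"revenue={METRICS['revenue'](rows):.2f}"


orders = [{"amount": 100.0, "refund": 10.0}, {"amount": 50.0, "refund": 0.0}]
print(bi_report(orders))
print(agent_context(orders))
```

Because both consumers call the registered function, changing the definition of revenue changes it for the report and the agent at the same time, and a team that tries to register a competing "revenue" gets an error rather than a silently divergent number.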

In 2026, a governance platform that sends alerts is not governance. It is a help desk ticket with extra steps.

Traditional data platforms were built to alert. A pipeline ingests data that violates a PII policy, and somewhere a notification fires. A human then reviews the alert, investigates the pipeline, and takes corrective action. Hours or days later.

This model worked when data moved slowly and compliance requirements were narrow. Neither is true today.

Gartner's research on active metadata, formalised in its Market Guide for Active Metadata (2021) and reinforced by the 2025 Magic Quadrant for Metadata Management Solutions, defines the shift clearly: governance must move from passive documentation to active, always-on policy enforcement.

The goal, per Gartner, is platforms that detect alignment exceptions between data as designed and data as it actually exists, in real time.

A platform with Programmable Governance does not alert you that a data contract has been violated. It kills the offending job before the violation propagates downstream. This is the distinction between a smoke alarm and an automated sprinkler system. The sprinkler does not wake you up while the house burns. It acts.
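The smoke alarm versus sprinkler distinction can be sketched in a few lines. This is a minimal illustration, assuming a hypothetical contract that forbids the columns in `PII_COLUMNS`; the names `ContractViolation`, `enforce_contract`, and `run_job` are invented for the example.

```python
class ContractViolation(Exception):
    """Raised when a batch breaks the data contract."""


# Hypothetical contract term: these columns must never reach the sink.
PII_COLUMNS = {"ssn", "email"}


def enforce_contract(batch: list) -> None:
    """Check every row before anything is written; raise on the first violation."""
    for index, row in enumerate(batch):
        leaked = PII_COLUMNS.intersection(row)
        if leaked:
            raise ContractViolation(
                f"row {index} carries forbidden columns: {sorted(leaked)}"
            )


def run_job(batch: list, sink: list) -> None:
    # Enforcement runs first, so a violating batch never touches the sink.
    enforce_contract(batch)
    sink.extend(batch)


warehouse = []
try:
    run_job([{"id": 1}, {"id": 2, "email": "a@example.com"}], warehouse)
except ContractViolation as err:
    print(f"job stopped: {err}")

print(len(warehouse))
```

An alert-only design would write the batch and then notify someone. Here the exception stops the job before the write, so nothing downstream ever sees the violating rows.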

Practical evaluation questions to ask vendors:

Organisations that get this right are not just reducing compliance risk. Alation's State of Data Culture Maturity research found that 89% of organisations with strong data leadership met or surpassed their revenue goals in the past year, a signal that mature, trust-based data governance is a business performance driver, not just a regulatory checkbox.

The ultimate proof of a unified data platform is not how it stores data. It is how it packages data for the world.

In a fragmented environment, building a Data Product is artisanal craft. An analyst writes a query. An engineer builds a pipeline. A governance team applies policies manually. A documentation author writes a data dictionary. Each step is done by a different person in a different tool. And when the underlying data changes, the whole chain breaks.

A genuinely unified platform provides what the Modern Data 101 breakdown of the complete data product lifecycle calls the Factory Floor: an end-to-end environment where the data product (its schema, logic, access policies, quality contracts, and versioning) is treated as the native unit of work, not an afterthought assembled from parts.

The critical distinction: a storage platform has data. A unified data platform ships data as governed, versioned, production-ready products.

For enterprises building toward agentic AI, this distinction is existential. AI agents require context, not just access. Data products provide that context in a portable, deployable form.

What to look for: Does the platform let you bundle data, business logic, access policies, and quality SLOs into a single deployable artifact? Can that artifact be versioned, monitored, and reused without manual re-engineering? If not, you are paying for a very expensive storage unit.
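As a rough picture of "a single deployable artifact", the sketch below bundles schema, logic, access policy, and a quality SLO into one immutable, versioned object. All field names and the `bump` helper are hypothetical; real platforms typically express this as a declarative spec rather than a Python class.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class DataProduct:
    """Hypothetical bundle: everything needed to ship the product travels together."""
    name: str
    version: str                 # "major.minor.patch"
    schema: tuple                # (column, type) pairs
    logic_sql: str               # the business logic that produces the data
    allowed_roles: frozenset     # access policy
    freshness_slo_minutes: int   # quality SLO

    def bump(self, **changes) -> "DataProduct":
        """Return a new major version with the given fields changed."""
        major = int(self.version.split(".")[0]) + 1
        return replace(self, version=f"{major}.0.0", **changes)


v1 = DataProduct(
    name="customer_360",
    version="1.0.0",
    schema=(("customer_id", "string"), ("lifetime_value", "decimal")),
    logic_sql="SELECT customer_id, SUM(amount) AS lifetime_value FROM orders GROUP BY 1",
    allowed_roles=frozenset({"analyst", "ai_agent"}),
    freshness_slo_minutes=60,
)

# Tightening the SLO produces a new version; the old artifact is left untouched.
v2 = v1.bump(freshness_slo_minutes=30)
print(v1.version, v2.version)
```

The design choice worth noting is immutability: because a change yields a new version instead of mutating the old one, consumers pinned to `1.0.0` keep working while `2.0.0` is rolled out.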

Explore how unified platforms operationalise the data product lifecycle as a standard at DataOS and the Modern Data Company, whose architecture is explicitly designed around the data product as the central unit of value.

Your data has gravity. Once it enters a proprietary format, the cost of escape grows exponentially with every passing quarter.

The "Safe Bet Fallacy" is one of the most expensive decisions in enterprise data strategy. It goes like this: staying within one vendor's ecosystem feels simpler. One contract, one support line, one throat to choke.

So organisations consolidate everything onto a single proprietary platform and discover, two years later, that migrating away from it would require rebuilding every pipeline, every semantic model, and every integration from scratch.

This is the black hole effect: not genuine unification, but enclosure. Layers stitched together just enough to tell the story of a unified stack while interoperability across ecosystems disappears.

The corrective is not to avoid consolidation. It is to demand Escape Velocity: the ability to port, extend, and evolve your architecture without paying an exit tax.

A truly modern unified data platform is opinionated about governance and logic, but agnostic about where data lives. It should natively embrace open interoperability standards:

As the Lakehouse 2.0 architecture suggests, the arrival of open table formats broke the original lock-in pattern, but vendors are actively re-enclosing these formats through acquisitions. Iceberg managed inside Databricks' walls is not the same as truly open Iceberg.

The evaluation question: Does the vendor's support for Iceberg, Delta, or OpenLineage allow you to switch compute engines without re-ingesting data? If the answer is no, or if it requires a paid connector to achieve, the "open standards" claim is marketing, not architecture.

You want to own the blueprint. Not just rent the building.

If your unified data platform does not reduce your organisation's decision latency, the time between a business question arising and a trusted answer being available, you are not interacting with a platform. You are interacting with expensive overhead.

Stop shopping for a Single Pane of Glass. Start applying the five criteria above, and look for a Single Kernel of Truth.

Want to go deeper? Explore how unified platforms and AI agents work together across domains, or read the full architectural standards for data product-native platforms at datadeveloperplatform.org.

A unified data platform is an integrated system in which security, metadata, governance, and storage share a common logic kernel, meaning a policy defined once is enforced everywhere, automatically. A data warehouse is a storage and query layer; it stores and retrieves structured data efficiently but does not govern logic, enforce contracts, or orchestrate data products across the enterprise. A unified platform is the floor, the ceiling, and the operating system. A data warehouse is one room inside the building.

Run the Single Kernel Test: ask the vendor to change a security policy in the ingestion layer and demonstrate its real-time propagation to the analytics layer without any manual sync step. Ask whether governance policies can be defined as code (data contracts) and enforced programmatically at the source. Ask which open standards (Iceberg, Delta, OpenLineage) are supported natively, and whether compute engines can be swapped without re-ingesting data. If a vendor hesitates on any of these, the integration is cosmetic.

Because AI amplifies the cost of a bad data foundation. AI agents require consistent semantic definitions, governed data products, and trustworthy lineage to function at scale. A proprietary data platform that locks your metadata, formats, or governance logic inside its own walls means your AI stack is equally imprisoned. As your AI ambitions grow, the exit cost grows with them. Exponentially.

A semantic layer is the logic layer that defines what business terms mean ("Revenue," "Customer," "Churn") and enforces those definitions consistently across every tool, report, and AI agent that accesses your data. Without a native semantic layer, each team builds its own private definition, which leads to conflicting reports, broken dashboards, and AI agents that cannot reason across domains. A unified platform delivers a semantic layer as a first-class citizen, not a plug-in.

A data contract is a machine-enforceable agreement between a data producer and a data consumer that specifies the schema, quality SLOs, PII policies, and access rules for a given dataset. On a truly unified platform, data contracts are enforced at the source, meaning a pipeline that violates a contract is terminated before the violation propagates, rather than generating an alert for a human to investigate after the fact. This is the difference between programmable governance and an alarm system.
